You got your demoscene program, but how about the source code of a beamracing hello world program?

It is the same thing. The separate screenfuls (the draggable tearline, the bouncing color bars, the Kefrens Bars) are only approximately 100 lines each after blackboxing a few thousand lines into a core easy-to-use beam racing “library” (classes for now, but it could be ported/rolled into a library later).

For the demoscene-style modules (which are actually great beam racing sandboxes for beginners too, being C#): there are modules that do different things, such as the mouse-dragged tearline, which is approximately 100 lines.

Due to demoscene rules of most of the exhibits, I can’t release any /portion/ of the code until it’s exhibited, even if I fork the core part.

If I don’t submit to a demoscene, then I can release pretty much immediately. If I do then I have to release code afterwards.

I’ll make a decision during July (which then determines the release date of Tearline Jedi) – stay tuned.

More than 75% of the people I asked told me to save it for a demoscene event first, so I am leaning that way. It’s an audacious abuse of high-framerate VSYNC OFF to produce real time rasters, so it has already surprised a few – and even more when I told them it’s just an easy Nerf language (C#) running off an opensource cross platform game engine (MonoGame C#) doing it instead of timing-critical assembly. Turning beam racing into child’s play, essentially.

It would be lovely to see this get displayed on the big screen at Assembly 2018 in front of that large audience, but let’s see.

However, early private invites to the git repo for co-development are fine – if you want to help me improve this demo for a demoscene submission – contact me at [email protected] … This is allowed according to the rules if you privately want to see the source code now (as long as you don’t use it in other software until it’s exhibited).

Ideas & alternate solutions are welcome… Generally, I want to broadcast this idea a little farther and wider. Appreciated!

So, if I understand this correctly, it would benefit enormously from a single buffering pipeline, right? Because then you wouldn’t have to call Present() or SwapBuffers() or whatever thousands of times a second.

Actually, I’d like to see if there is a difference in performance with something like Linux KMS/DRM, which allows for true single buffering compared to Windows.

Yes, it would benefit enormously from a front buffer – you could simply render single pixel rows directly to the front buffer a few scanlines ahead of the real raster. The beam racing margin could be adjustable in that case.

But without access to that, we still can at least coarsely simulate front buffer rendering via frameslicing.

You don’t necessarily need thousands of frameslices per second. Only Kefrens Bars requires that, because it does “Atari TIA style” realtime generation of pixel rows. That, indeed, does require thousands of Present() calls a second for realtime-generated “pixel rows”.

However, that is not the beamracing workflow for emulators.

From the emulator POV, it is very scalable.

- 1 frameslice per refresh cycle - 60 Present() or glutSwapBuffers() calls per second of full screenfuls

- 2 frameslices per refresh cycle - 120 Present() or glutSwapBuffers() calls per second of 1/2 screenfuls

- 3 frameslices per refresh cycle - 180 Present() or glutSwapBuffers() calls per second of 1/3 screenfuls

- 4 frameslices per refresh cycle - 240 Present() or glutSwapBuffers() calls per second of 1/4 screenfuls

etc.

So 10 frameslices per refresh cycle means 600 Present() or glutSwapBuffers() calls per second, each covering 1/10 of a screenful. Input lag, if using the tight jittermargin technique, is between 1 and 2 frameslices’ worth. So 600 frameslices per second gives as little as 1/10th of a frame of input lag.
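To make the scaling above concrete, here is a small Python sketch of the arithmetic (Python is used only for illustration; it is not the C# demo code or a LibRetro port). The function name `frameslice_stats` and its dictionary keys are made up for this example, and the 1-to-2-frameslice lag range is simply the estimate quoted above.

```python
def frameslice_stats(refresh_hz: float, slices_per_refresh: int) -> dict:
    """Present rate and rough input-lag range for a given frameslice count."""
    if slices_per_refresh < 1:
        raise ValueError("need at least one frameslice per refresh cycle")
    presents_per_second = refresh_hz * slices_per_refresh
    slice_ms = 1000.0 / presents_per_second  # duration of one frameslice
    return {
        "presents_per_second": presents_per_second,
        "screen_fraction": 1.0 / slices_per_refresh,
        # Assumption taken from the text: lag is 1 to 2 frameslices' worth.
        "lag_ms_min": slice_ms,
        "lag_ms_max": 2.0 * slice_ms,
    }


if __name__ == "__main__":
    for n in (1, 2, 3, 4, 10):
        s = frameslice_stats(60.0, n)
        print(f"{n:2d} slices: {s['presents_per_second']:.0f} presents/s, "
              f"lag {s['lag_ms_min']:.2f} to {s['lag_ms_max']:.2f} ms")
```

At 60Hz with 10 frameslices this gives 600 presents per second, matching the figure above.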

All raster-timed of course (obviously, the 1-frameslice case is simply just racing the VBlank…). Since you’d know where the realraster is, you can just render only the frameslice, rather than the whole framebuffer, and present it to the appropriate area of the screen.

I might be able to raise my frameslice rate to over 10,000 frameslices per second that way, but the MonoGame engine doesn’t let me get that low level. However, Direct3D/OpenGL may have some arguments that allow you to blit your offscreen buffer only to the specific region of the onscreen buffer, saving the memory bandwidth of uselessly blitting the areas that are outside of the current raster. This may be something for future experimentation.

For Tearline Jedi, I’m keeping it lowest common denominator – all it requires is a tearlines-supported API – but there are indeed many optimizations possible.

While RunAhead is really nice, this is a useful additional tool in the lag-reduction toolbox for the “right tool for right job” situations. It reproduces original latency more faithfully, and it is more Arduino-friendly than the RunAhead technique at low frameslice counts. This technique is already being done for certain Android VR apps, for 4-frameslice beam-racing.

I could be wrong, but what I think @Dwedit means is that he would just like to start working on an implementation for RetroArch for this idea instead of stalling this out for months on end and having to wait on some demo to drop first. I can kinda understand where he is coming from too if that is the case.

Fair comment (Liked).

Lemme see how we can accelerate things. I’ll spend a couple hours to write this proposal post to see if this is a good plan:

Some considerations:

- The demo testing will help vet out problems/bugs.

- The code is in C# which is not the same language as LibRetro.

- Porting the core libraries to C or C++ was going to be a later project, or a job for a volunteer.

So, need to figure out which is faster:

- Wait for me to release source code (or gain private access to my git repo)

- Use it as a private sandbox to learn frameslice beamracing

- Write LibRetro frameslice beamracing from scratch

Or

- Bootstrap by learning from WinUAE codebase which already has frameslice beamracing

- Use it as an educational sandbox

- Write LibRetro frameslice beamracing from scratch

Or

- Wait (longer) for the C/C++ ports of the raster calculator modules

- Use it directly within LibRetro frameslice beamracing.

In all of these approaches, some elements need to be written from scratch as LibRetro prerequisites, independently of all the above. Let me see if I can suggest a blueprint of how to proceed…

Recommended Hook

- Add the per-raster callback function called “retro_set_raster_poll”

- The arguments are identical to “retro_set_video_refresh”

- Do it to one emulator module at a time (begin with the easiest one).

The emulator module calls the raster poll after every emulator scan line plotted. The incomplete contents of the emulator framebuffer (complete up to the most recently plotted emulator scanline) are provided. This allows centralization of frameslice beamracing in the quickest and simplest way.
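As a rough illustration of the hook, here is a toy Python model (not C, and not real LibRetro code). `ToyCore`, `ToyFrontend`, and the `RasterPoll` callback shape are all hypothetical names invented for this sketch; the only idea taken from the proposal is that the module calls a central callback after every plotted scanline, with the same kind of arguments a video refresh callback gets.

```python
from typing import Callable, List, Optional

# Hypothetical callback shape, mirroring a video refresh callback:
# (framebuffer, width, height, pitch_in_bytes). Not a real libretro signature.
RasterPoll = Callable[[List[bytes], int, int, int], None]


class ToyCore:
    """Stand-in emulator module that plots one scanline at a time."""

    def __init__(self, width: int, height: int) -> None:
        self.width = width
        self.height = height
        self.framebuffer: List[bytes] = [bytes(width)] * height
        self._raster_poll: Optional[RasterPoll] = None

    def set_raster_poll(self, callback: RasterPoll) -> None:
        self._raster_poll = callback

    def run_frame(self) -> None:
        for y in range(self.height):
            # "Plot" scanline y (one byte per pixel, so pitch == width).
            self.framebuffer[y] = bytes([y & 0xFF]) * self.width
            if self._raster_poll is not None:
                # The framebuffer is complete only up to line y at this point.
                self._raster_poll(self.framebuffer, self.width,
                                  self.height, self.width)


class ToyFrontend:
    """Counts polls to know how many emulator scanlines are complete."""

    def __init__(self) -> None:
        self.lines_seen = 0

    def on_raster_poll(self, framebuffer: List[bytes], width: int,
                       height: int, pitch: int) -> None:
        # Wraps back to 1 on the first scanline of the next frame.
        self.lines_seen = self.lines_seen % height + 1


if __name__ == "__main__":
    core, frontend = ToyCore(320, 240), ToyFrontend()
    core.set_raster_poll(frontend.on_raster_poll)
    core.run_frame()
    print("scanlines polled this frame:", frontend.lines_seen)
```

Because the callback carries no scanline number, the frontend in this sketch infers progress by counting calls; whether a real hook should pass the line number explicitly is an open design choice.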

Getting the VSYNC timestamps

This technique is only needed for the register-less method, to listen for VSYNC timestamps while in VSYNC OFF mode, and to poll the raster line:

- Get your primary display adaptor device name, such as \\.\DISPLAY1 … For me in C#, I use Screen.PrimaryScreen.DeviceName to get this, but in C/C++ you can use EnumDisplayDevices() …

- Next, call D3DKMTOpenAdapterFromHdc() with this info (it takes an HDC, which you can create for that device name, e.g. with CreateDC()) to open the hAdaptor handle

- For listening to VSYNC timestamps, run a thread with D3DKMTWaitForVerticalBlankEvent() on this hAdaptor handle. Then immediately record the timestamp. This timestamp represents the end of a refresh cycle and beginning of VBI. That’s your VSYNC callback signal.

Other platforms have various methods of getting a VSYNC event hook (e.g. Mac CVDisplayLinkOutputCallback) which roughly corresponds to the Mac’s blanking interval. If you are using the registerless method and generic precision clocks (e.g. RDTSC wrappers), these can potentially be the only #ifdefs in your cross platform beam racing: just the various methods of getting VSYNC timestamps. The rest is not platform-specific.

Getting the current raster scan line number

For raster calculation you can do one of the two:

(A) Raster-register-less method: Use QueryPerformanceCounter to profile the times between refresh cycles. You can use the known fractional refresh rate (from QueryDisplayConfig) to bootstrap this “best-estimate” refresh rate calculation, and refine it in realtime. Calculating the raster position is simply a matter of relative time between two VSYNC timestamps, allowing 5% for VBI (meaning 95% of 1/60sec for 60Hz would be the display scanning out). NOTE: Optionally, to improve accuracy, you can dejitter. Use a trailing 1-second interval average to dejitter any inaccuracies (they calm to 1-scanline-or-less raster jitter), and ignore all outliers (e.g. missed VSYNC timestamps caused by computer freezes). Alternatively, just use the jittermargin technique to hide VSYNC timestamp inaccuracies.
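A minimal Python sketch of method (A), under a few stated assumptions: the VSYNC timestamp marks the start of the VBI (as described earlier), the VBI is a fixed fraction of the refresh period (5% by default), and timestamps are plain seconds from any monotonic clock. The function names and the 25% outlier tolerance are invented for illustration.

```python
from typing import List, Optional


def refine_period(vsync_times: List[float], nominal_period: float,
                  tolerance: float = 0.25) -> float:
    """Average the VSYNC intervals, skipping outliers such as missed VSYNCs.

    Pass in roughly the last second of timestamps to get a trailing average.
    """
    intervals = [b - a for a, b in zip(vsync_times, vsync_times[1:])]
    good = [d for d in intervals
            if abs(d - nominal_period) <= tolerance * nominal_period]
    return sum(good) / len(good) if good else nominal_period


def estimate_scanline(now: float, last_vsync: float, refresh_period: float,
                      visible_lines: int,
                      vbi_fraction: float = 0.05) -> Optional[int]:
    """Guess the real raster line from the time elapsed since the last VSYNC.

    Returns None while the estimate falls inside the blanking interval.
    """
    phase = ((now - last_vsync) % refresh_period) / refresh_period
    if phase < vbi_fraction:
        return None  # still in VBI, nothing is being scanned out
    active_phase = (phase - vbi_fraction) / (1.0 - vbi_fraction)
    return int(active_phase * visible_lines)


if __name__ == "__main__":
    stamps = [0.0, 0.0167, 0.0334, 0.0668, 0.0835]  # one VSYNC was missed
    period = refine_period(stamps, 1.0 / 60.0)
    print("refined period:", period)
    print("line:", estimate_scanline(stamps[-1] + period / 2, stamps[-1],
                                     period, 1080))
```

The estimate is only as good as the assumed VBI fraction, which is why knowing the real VBI size helps.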

(B) Raster-register-method: Use D3DKMTGetScanLine to get your GPU’s current scanline on the graphics output. Wait at least 1 scanline between polls (e.g. sleep 10 microseconds between polls), since this is an expensive API call that can stress a GPU if busylooping on this register.

NOTE: If you need to retrieve the “hAdaptor” parameter for D3DKMTGetScanLine, get your adaptor device name such as \\.\DISPLAY1 via EnumDisplayDevices(), then call D3DKMTOpenAdapterFromHdc() for that adaptor in order to open the hAdaptor handle, which you can then finally pass to D3DKMTGetScanLine. This works with Vulkan/OpenGL/D3D 9/10/11/12+ … D3DKMT is simply a hook into the hAdaptor that is being used for your Windows desktop, which exists as a D3D surface regardless of what API your game is using, and all you need is to know the scanline number. So who gives a hoot about the “D3DKMT” prefix, it works fine for beamracing with OpenGL or Vulkan API calls. (KMT stands for Kernel Mode Thunk, but you don’t need Admin privileges to do this specific API call from userspace.)

Improved VBI size monitoring

You don’t need raster-exact precision for basic frameslice beamracing, but knowing the VBI size makes frameslice beamracing more accurate, since VBI size varies so much from platform to platform and resolution to resolution. Often it just varies a few percent, and most sub-millisecond inaccuracies are easily hidden within the jittermargin technique.

But, if you’ve programmed with retro platforms, you are probably familiar with the VBI (blanking interval) – essentially the overscan space between refresh cycles. This can vary from 1% to 5% of a refresh cycle, though extreme timings tweaking can make VBI more than 300% the size of the active image (e.g. Quick Frame Transport tricks – fast scan refresh cycles with long VBIs in between). For cross platform frameslice beamracing it’s OK to assume ~5% being the VBI, but there are many tricks to know the VBI size.

- QueryDisplayConfig() on Windows will tell you the Vertical Total. (easiest)

- Or monitor the ratio of .InVerticalBlank = true versus .InVerticalBlank = false (via D3DKMTGetScanLine) by watching the flag changes (wait a few microseconds between polls, or a 1 scanline delay, since D3DKMTGetScanLine is an ‘expensive’ API call)
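Both approaches boil down to a simple ratio, sketched below in Python. The function names are made up for this example. The 1125-line vertical total is the standard timing for 1080p at 60Hz and is used here only as sample input; the second function assumes the polls were evenly spaced in time.

```python
from typing import Iterable


def vbi_fraction_from_timings(vertical_total: int, active_lines: int) -> float:
    """Share of the refresh cycle spent in blanking, from the Vertical Total."""
    if not 0 < active_lines <= vertical_total:
        raise ValueError("active_lines must be in 1..vertical_total")
    return (vertical_total - active_lines) / vertical_total


def vbi_fraction_from_samples(in_vblank_samples: Iterable[bool]) -> float:
    """Share of evenly spaced polls that reported being in vertical blank."""
    samples = list(in_vblank_samples)
    if not samples:
        raise ValueError("need at least one sample")
    return sum(samples) / len(samples)


if __name__ == "__main__":
    print(vbi_fraction_from_timings(1125, 1080))   # standard 1080p60 timing
    print(vbi_fraction_from_samples([True] * 5 + [False] * 95))
```

Either result can replace the ~5% default when converting a time offset into a scanline estimate.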

Turning The Above Data into Real Frameslice Beamracing

For simplicity, begin with emu Hz = real Hz (e.g. 60Hz)

- Have a configuration parameter of number of frameslices (e.g. 10 frameslices per refresh cycle)

- Let’s assume 10 frameslices for this exercise.

- Actual screen 1080p means 108 real pixel rows per frameslice.

- Emulator screen 240p means 24 emulator pixel rows per frameslice.

- Your emulator module calls the centralized raster poll (retro_set_raster_poll) right after every emulator scan line. The centralized code counts the number of emulator pixel rows completed to fill a frameslice. The central code will do either (5a) or (5b):

(5a) Return immediately to the emulator module if a full new framesliceful has not yet been appended to the existing offscreen emulator framebuffer (don’t do anything to the partially completed framebuffer). Update a counter, do nothing else, return immediately.

(5b) Once a full frameslice worth has built up since the last frameslice presented, it’s time to present the next frameslice. Don’t return right away. Instead, immediately do an intentional CPU busyloop until the realraster reaches roughly 2 frameslice-heights above your emulator raster (relative screen-height wise). So if your emulator framebuffer is filled up to the bottom edge of where frameslice #4 is, busyloop until the realraster hits the top edge of frameslice #3. Then immediately Present() or glutSwapBuffers() upon completing the busyloop. Then Flush() right away.

NOTE: The tearline (invisible if the graphics at the raster are unchanged) will sometimes be a few pixels below the scan line number (the amount of time for a memory blit, which is memory bandwidth dependent; you can compensate for it, or you can just hide any inaccuracy in the jittermargin).

NOTE2: This is simply the recommended beamrace margin to begin experimenting with: a 2 frameslice beamracing margin is very jitter-margin friendly.
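The count-then-wait-then-present logic can be sketched in Python as below. This is a simplified model, not a real implementation: `FrameslicePresenter` is a hypothetical class, `get_real_raster` and `present` are injected stand-ins for the platform raster query and the Present()/swap call, the Flush() step is omitted, and raster wraparound between refresh cycles is ignored (the first two slices of a frame are simply presented without waiting).

```python
from typing import Callable


class FrameslicePresenter:
    """Toy model of the central raster-poll logic (count, wait, present)."""

    def __init__(self, emu_lines: int, real_lines: int, slices: int,
                 get_real_raster: Callable[[], int],
                 present: Callable[[int], None],
                 margin_slices: int = 2) -> None:
        self.slices = slices
        self.emu_slice = emu_lines // slices     # e.g. 240 // 10 = 24 rows
        self.real_slice = real_lines // slices   # e.g. 1080 // 10 = 108 rows
        self.get_real_raster = get_real_raster
        self.present = present
        self.margin_slices = margin_slices
        self.lines_done = 0

    def raster_poll(self) -> None:
        """Call once after every emulator scanline has been plotted."""
        self.lines_done += 1
        if self.lines_done % self.emu_slice != 0:
            return  # slice not complete yet: just count and return
        slices_done = self.lines_done // self.emu_slice
        # Wait until the real raster is margin_slices above the emu raster.
        target = (slices_done - self.margin_slices) * self.real_slice
        while target > 0 and self.get_real_raster() < target:
            pass  # busyloop; real code polls a clock or the raster register
        self.present(slices_done)
        if slices_done == self.slices:
            self.lines_done = 0  # frame finished, start counting again


if __name__ == "__main__":
    raster = {"line": 0}

    def fake_raster() -> int:
        raster["line"] += 10  # pretend the beam moves 10 lines per poll
        return raster["line"]

    presenter = FrameslicePresenter(
        240, 1080, 10, fake_raster,
        lambda s: print(f"present after slice {s}, raster {raster['line']}"))
    for _ in range(240):
        presenter.raster_poll()
```

With 10 frameslices, finishing slice #4 makes the sketch wait for real line 216, which is the top edge of frameslice #3.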

Note: 120Hz scanout diagram from a different post of mine. Replace with the emu refresh rate matching the real refresh rate, i.e. monitor set to 60 Hz instead. This diagram is to help raster veterans conceptualize how modern-day tearlines relate to raster position as a time-based offset from VBI.

Bottom line: As long as you keep repeatedly Present()-ing your incompletely-rasterplotted (but progressively more complete) emulator framebuffer ahead of the realraster, the incompleteness of the emulator framebuffer never shows glitches or tearlines. The display never has a chance to display the incompleteness of your emulator framebuffer, because the display’s realraster is showing only the latest completed portions of your emulator’s framebuffer. You’re simply appending new emulator scanlines to the existing emulator framebuffer, and presenting that incomplete emulator framebuffer always ahead of real raster. No tearlines show up because the already-refreshed-part is duplicate (unchanged) where the realraster is. It thusly looks identical to VSYNC ON.

Precision Assumptions:

- Scaling doesn’t have to be exact.

- The two frameslice offset gives you a one-frameslice-ahead jitter margin

- You can vary the height of consecutive frameslices if you want, slightly, or lots, or for rounding errors.

- No artifacts show because the frameslice seams are well into the jitter margin.

Special Note On HLSL-Style Filters: You can use HLSL/fuzzyline style shaders with frameslices. WinUAE just does a full-screen redo on the incomplete emu framebuffer, but one could do it selectively (from just above the realraster all the way to just below the emuraster) as a GPU performance-efficiency optimization.

Adverse Conditions To Detect To Automatically Disable Beamracing

Optional, but for user-friendly ease of use, you can automatically enter/exit beamracing on the fly if desired. You can verify common conditions, such as making sure all of these are met:

- Rotation matches (scan direction same) = true

- Supported refresh rate = true

- Module has a supported raster hook = true

- Emulator performance is sufficient = true

Exiting beamracing can be simply switching to “racing the VBI” (doing a Present() between refresh cycles), so you’re just simulating traditional VSYNC ON via VSYNC OFF via that manual VSYNC’ing. This is like 1-frameslice beamracing (next frame response). This provides a quick way to enter/exit beamracing on the fly when conditions change dynamically. A Surface Tablet gets rotated, a module gets switched, refresh rate gets changed mid-game, etc…
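A tiny Python sketch of that enter/exit decision, assuming the four conditions listed above are already available as booleans. `BeamraceConditions`, `choose_mode`, and the mode strings are hypothetical names for illustration only.

```python
from dataclasses import dataclass


@dataclass
class BeamraceConditions:
    rotation_matches: bool          # scan direction is the same
    refresh_rate_supported: bool
    module_has_raster_hook: bool
    performance_sufficient: bool


def choose_mode(c: BeamraceConditions) -> str:
    """Pick frameslice beamracing only when every condition holds."""
    all_ok = (c.rotation_matches and c.refresh_rate_supported
              and c.module_has_raster_hook and c.performance_sufficient)
    # Fallback: present once per refresh during VBI (manual VSYNC).
    return "frameslice_beamrace" if all_ok else "race_the_vbi"


if __name__ == "__main__":
    print(choose_mode(BeamraceConditions(True, True, True, True)))
    print(choose_mode(BeamraceConditions(False, True, True, True)))
```

Re-evaluating this once per refresh cycle would let the frontend react when a tablet is rotated or the refresh rate changes mid-game.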

General Best Practices

Debugging raster problems can be frustrating, so here’s knowledge by myself/Calamity/Toni Wilen/Unwinder/etc. These are big timesaver tips:

- Raster error manifests itself as tearline jitter.

- If jitter stays within the raster jitter margin, no tearing or artifacts show up.

- It’s an amazing performance profiling tool; tearline jitter makes your performance fluctuations very visible. In debug mode, use color-coded tints for your frameslices, to help make normally-hidden raster jitter more visible (WinUAE uses this technique).

- Raster error is more severe at the top edge than the bottom edge. This is because the GPU is busier during this region (e.g. scheduled Windows compositing thread, stuff that runs every VSYNC event in the Windows Kernel, etc). It’s minor, but it means you need to make sure your beam racing margin accommodates this.

- GPU power management. If your emulator is very light on a powerful GPU, your GPU’s fluctuating power management will amplify raster error. This may mean that having too few frameslices will amplify tearline jitter. Fixes include (A) configuring more frameslices, or (B) simply detecting when the GPU is too lightly loaded and making it busy one way or another (e.g. automatically using more frameslices). The rule of thumb is don’t let the GPU idle for more than a millisecond if you want scanline-exact rasters. Or you can simply use a bigger jittermargin to hide raster jitter.

- If you’re using D3DKMTGetScanLine… do not busyloop on it because it stresses the GPU. Do a CPU busyloop of a few microseconds before polling the raster register again.

- Do a Flush() before your busyloop before your precision-timed Present(). This massively increases accuracy of frameslice beamracing. But it can decrease performance.

- Thread-switching on some older CPUs can cause RDTSC or QueryPerformanceCounter to unexpectedly tick backwards. So keep QueryPerformanceCounter polls on the same CPU thread with a BeginThreadAffinity. You probably already know this from elsewhere in the emulator, but this is mentioned here as being relevant to beamracing.

- Instead of rasterplotting emulator scanlines into a blank framebuffer, rasterplot on top of a copy of the emulator’s previous refresh cycle’s framebuffer. That way, there’s no blank/black area underneath the emulator raster. This greatly reduces the visibility of glitches during beamrace fails (falling outside of the jitter margin, too far behind or too far ahead): no tearing will appear unless the Present() lands within 1 frameslice of the realraster, or 1 refresh cycle behind. That is a humongous jitter margin of almost one full refresh cycle. This plot-on-old-refresh technique also makes coarser frameslices practical, e.g. 2-frameslice beamracing (bottom-half screen Present() while still scanning out the top half, and top-half screen Present() while scanning out the bottom half). When out-of-bounds happens, the artifact is simply brief instantaneous tearing only for that specific refresh cycle. Typically, on most systems, the emulator can run artifactless, looking identical to VSYNC ON, for many minutes before you might see a brief instantaneous tearline from a momentary computer freeze, which instantly disappears when the beamrace gets back in sync.

- Some platforms support microsecond-accurate sleeping, which you can use instead of busylooping. Some platforms can also set the granularity of the sleep (there’s an undocumented Windows API call for this). As a compromise, some of us just do a normal thread sleep until a millisecond prior, then do a busyloop to align to the raster.

- Don’t worry about mid-scanline splits (e.g. HSYNC timings). We don’t have to worry about such sheer accuracy. The GPU transceiver reads full pixel rows at a time. Being late for a HSYNC simply means the tearline moves down by 1 pixel. Still within your raster jitter margin. We can jitter quite badly when using a forgiving jitter margin – (e.g. 100 pixels amplitude raster jitter will never look different from VSYNC ON). Precision requirement is horizontal scanrate (e.g. 67KHz means 1/67000sec precision needed for scanline-exact tearlines – which is way overkill for 10-frameslice beamracing which only needs 1/600sec precision at 60Hz).

- Use multimonitor. Debugging is way easier with 2 monitors. Use your primary in exclusive full screen mode, with the IDE on a 2nd monitor. (Not all 3D frameworks behave well with that, but if you’re already debugging emulators, you’ve probably made this debugging workflow compatible already anyway). You can do things like write debug data to a console window (e.g. raster scanline numbers) when debugging pesky raster issues.

- Some digital display outputs exhibit micropacketization behavior (DisplayPort at lower resolutions especially, where multiple rows of pixels seem to squeeze into the same packet – my suspicion). So your raster jitter might vibrate in 2 or 4 scan line multiples rather than single-scanline multiples. This may or may not happen more often with interleaved data (DisplayPort cable handling 2 displays or other PCI-X data), but these outputs are still pretty raster-accurate otherwise: the raster inaccuracies are sub-millisecond, and fall far within the jitter margin. Advanced algorithms such as DSC (Display Stream Compression of new DisplayPort implementations) can amplify raster jitter a bit. But don’t worry; all known micro-packetization inaccuracies fall well within the jittermargin technique, so no problem. I only mention this in case you find raster-jitter differences between different video outputs.

- Become more familiar with how the jitter-margin technique saves your ass. If you do Best-Practice #9 (rasterplotting on top of the previous refresh cycle’s framebuffer), you gain a full wraparound jittermargin (you see, step #9 allows you to Present() the previous refresh cycle on the bottom half of the screen, while still rendering the top half…). If you use 10 frameslices at 1080p, your jitter safety margin becomes (1080 - 108) = 972 scanlines before any tearing artifacts show up! No matter where the real raster is, your jitter margin is a full wraparound to the previous refresh cycle. The two bounds are pageflip too late (more than 1 refresh cycle ago) and pageflip too soon (into the same frameslice that has still not completed scanning out onto the display). Between these two bounds is one full refresh cycle minus one frameslice! So don’t worry about even a 25 or 50 scanline jitter inaccuracy (erratic beamracing where the margin between realraster and emuraster can randomly vary) in this case… It still looks perfectly like VSYNC ON until it goes out of that 972-scanline full-wraparound jitter margin. For minimum lag, you do want to keep the beam racing margin tight (you could make the beamrace margin adjustable as a config value, if desired – though I just recommend “aim the Present() at 2 frameslice margin” for simplicity), but you can fortunately surge ahead slightly or fall behind lots, and still recover with zero artifacts. The clever jittermargin technique that permanently hides tearlines inside the jittermargin makes frameslice beam-racing very forgiving of transient background activity.

- Get familiar with how it scales up/down well to powerful and underpowered platforms. Yes, it works on Raspberry Pi. Yes, it works on Android. While high-frameslice-rate beamracing requires a powerful GPU, especially with HLSL filters, low-frameslice beamracing makes it easier to run cycle-exact emulation at a very low latency on less powerful hardware: the emulator can merrily emulate at 1:1 speed (no surge execution needed), spending more time on power-consuming cycle-exactness or running on slower mobile GPUs. You’re simply early-presenting your existing incomplete offscreen emulator framebuffer (as it gets progressively more complete). Just adjust your frameslice count to an equilibrium for your specific platform. 4 is super easy on the latest Androids and Raspberry Pi (basically 4 frameslice beam racing for 1/4th frame subrefresh input lag – still damn impressive for a Pi or Android) while only adding about 10% overhead to the emulator.

- If you are on a platform with front buffer rendering (single buffer rendering), count yourself lucky. You can simply rasterplot new pixel rows directly into the front buffer instead of keeping the buffer offscreen (as you already are)! And plot on top of existing graphics (overwrite the previous refresh cycle) for a jitter margin of a full refresh cycle minus 1-2 pixel rows! Just provide a config parameter for the beamrace margin (vertical screen height percentage difference between emuraster and realraster), to adjust the tightness of beamracing. You can support the frameslicing VSYNC OFF technique and the frontbuffer technique with the same suggested API, retro_set_raster_poll, which makes it futureproof for future beamracing workflows.

- Yes, it works with curved scanlines in HLSL/filter type algorithms. Simply adjust your beamracing margin to prevent the horizontally straight realraster from touching the top parts of curved emurasters. Usually a few pixel rows will do the job. You can add a scanlines-offset-adjustment parameter or a frameslice-count-offset adjustment parameter.

- Temporarily turn off debug output when programming/debugging real world beam racing. When running in debug mode, create your own built-in graphics console overlay, not a separate console window: don’t use debug console-writing to the IDE or a separate shell window during beam racing. It can glitch massively if you generate lots of debug output to a console window. Instead, display debug text directly in the 3D framebuffer, and try to buffer your debug-text-writing until your blanking interval, then display it as a block of text at the top of the screen (like a graphics console overlay). Even doing the 3D API calls to draw a thousand letters of text on screen will cause far fewer glitches than trying to run a 2nd separate window of text. IDE debug overheads and shell window overheads can cause massive beam-racing glitches if you try to output debug text; some Debug output commands can cause a >16ms stall. I suspect that some IDEs are programmed in a garbage-collected language and sometimes the act of writing console output causes a garbage-collect event to occur. Or some other really nasty operating-system / IDE environment overheads. So if you’re running in debug mode while debugging raster glitches, temporarily turn off the usual debug output mechanism, and output instead as a graphics-text overlay on your existing 3D framebuffer, even if it means redundant re-drawing of a line of debugging text at the top edge of the screen every frame.
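The sleep-then-busyloop compromise mentioned in the tips above can be sketched in Python like this. `wait_until` and its `spin_window` parameter are invented names; the 1 millisecond spin window is the figure from the tip, and real sleep granularity varies by platform, so treat this as an illustration of the pattern rather than a tuned implementation.

```python
import time


def wait_until(deadline: float, spin_window: float = 0.001) -> float:
    """Sleep coarsely, then busyloop for the last spin_window seconds.

    deadline is a time.perf_counter() value. Returns the time we stopped at.
    """
    while True:
        remaining = deadline - time.perf_counter()
        if remaining <= spin_window:
            break
        # Coarse sleep; may overshoot a little, which the loop re-checks.
        time.sleep(remaining - spin_window)
    while time.perf_counter() < deadline:
        pass  # precise busyloop for the final stretch
    return time.perf_counter()


if __name__ == "__main__":
    start = time.perf_counter()
    woke = wait_until(start + 0.005)
    print(f"overshoot: {(woke - (start + 0.005)) * 1e6:.1f} microseconds")
```

The sleep keeps CPU usage down during long waits, while the short busyloop keeps the final alignment tight.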

Hopefully these best practices reduce the amount of hairpulling during frameslice beamracing.
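For the wraparound jitter margin arithmetic from the tips above (1080 - 108 = 972 at 10 frameslices), here is a short Python helper. `wraparound_jitter_margin` is a made-up name, and the formula is just the one quoted in the tip: one full refresh cycle minus one frameslice, assuming you plot on top of the previous refresh cycle's framebuffer.

```python
def wraparound_jitter_margin(screen_lines: int, slices: int) -> int:
    """Scanlines of slack: one full refresh cycle minus one frameslice."""
    if slices < 1 or screen_lines < slices:
        raise ValueError("need 1 <= slices <= screen_lines")
    slice_height = screen_lines // slices
    return screen_lines - slice_height


if __name__ == "__main__":
    for n in (2, 4, 10):
        print(f"{n:2d} frameslices at 1080p: "
              f"{wraparound_jitter_margin(1080, n)} scanlines of margin")
```

Coarser frameslices shrink the margin (540 scanlines at 2 frameslices), which is one way to see why finer slicing is more forgiving.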

Special Notes

- Special Note about Rotation: Emulator devices should already report their screen orientation (portrait, landscape), which generally also defines scan direction. QueryDisplayConfig() will tell you the real screen orientation. Default orientation is always top-to-bottom scan on all PC/Mac GPUs. 90 degree counterclockwise display rotation changes scan direction into left-to-right. If emulating Galaxian, this is quite fine if you’re rotating your monitor (left-right scan) to match Galaxian (left-right scan); then beamracing works.

- Special Note about Unsupported Refresh Rates: Begin KISS and worry about 50Hz/60Hz only first. Start easy. Then iterate by adding support for other refresh rates, like multiples. 120Hz is simply cherrypicking every other refresh cycle to beam race. For the in-between refresh cycles, just leave the existing frame up (the already completed frame) until the refresh cycle that you want to beamrace is about to begin. In reality, there are very few unbeamraceable refresh rates: even beamracing 60fps onto 75Hz is simply beamracing cherrypicked refresh cycles (it’ll still stutter like 60fps@75Hz VSYNC ON though).

- Advanced Note about VRR Beam Racing: Before beam racing variable refresh rate modes (e.g. enabling GSYNC or FreeSync and then beamracing that), wait until you’ve mastered all the above. So for now, disable VRR when implementing frameslice beamracing for the first time. Add VRR compatibility as a last step once you’ve gotten everything else working reasonably well. It’s easy to do once you understand it, but the concept of VRR beamracing is a bit tricky to grasp at first. VRR+VSYNC OFF supports beamracing on VRR refresh cycles. The main consideration is that the first Present() begins the manually-triggered refresh cycle (.InVerticalBlank becomes false and ScanLine starts incrementing), and you can then frameslice beamrace that normally, like an individual fixed-Hz refresh cycle. Now, one additional very special, unusual consideration is the uncontrolled VRR repeat-refresh. You will need to do emergency catchup beamraces on VRR displays if a display decides to do an uncommanded refresh cycle (e.g. when a display+GPU decides to do a repeat-refresh cycle; this often happens when the framerate goes below the VRR range, e.g. under 30fps on a 30Hz-144Hz VRR display). Most VRR displays will repeat-refresh automatically until they have fully displayed an untorn refresh cycle. If this happens and you’ve already begun emulating a new emulator refresh cycle, you have to immediately start your beamrace early (rather than at the wanted precise time). So if you do a frameslice beamrace of a VRR refresh cycle, the GPU will send a repeat-refresh to the display automatically immediately. There might be an API call to suppress this behavior, but we haven’t found one. This unwanted behavior makes beamracing 60fps onto a 75Hz FreeSync display difficult to do stutter-free. But it works fine for 144Hz VRR displays: we find it’s easy to be stutterfree when the VRR max is at least twice the emulator Hz, since we don’t care about those automatic-repeat-refresh cycles that aren’t colliding with the timing of the next beamrace.
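For the fixed-Hz case in the note on unsupported refresh rates above, cherrypicking refresh cycles can be sketched in Python with integer arithmetic. `should_beamrace_cycle` is a hypothetical helper, it assumes whole-number refresh rates for simplicity, and it says nothing about VRR.

```python
def should_beamrace_cycle(cycle_index: int, real_hz: int, emu_hz: int) -> bool:
    """True when this real refresh cycle should carry a new emulator frame.

    Spreads emu_hz beamraced cycles evenly across every real_hz real cycles.
    On the other cycles, leave the already completed frame on screen.
    """
    if emu_hz > real_hz:
        raise ValueError("emulator Hz above display Hz is not handled here")
    return (cycle_index * emu_hz) % real_hz < emu_hz


if __name__ == "__main__":
    print([should_beamrace_cycle(i, 120, 60) for i in range(4)])
    raced = sum(should_beamrace_cycle(i, 75, 60) for i in range(75))
    print("beamraced cycles per second at 75Hz:", raced)
```

At 120Hz this picks every other refresh cycle; at 75Hz it picks 4 out of every 5, which is exactly where the 60fps@75Hz stutter comes from.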

Is this a sufficient QuickStart for rapidly getting started with RetroArch frameslice beamracing?

At the very least, the 2 hours I spent writing this post for you can hopefully help you achieve experimental 60Hz beamracing within 2 or 3 days of programming?

(Details may take longer, e.g. debugging VRR beamrace support, but 60Hz frameslice beamracing is typically “easier-than-expected” to add according to two other emulator authors; Toni Wilen of WinUAE told me that.)

I’ll be able to provide more snippets of source code, examples, and suggestions (without violating demo rules; and besides, this way is probably faster and more C/C++ useful anyway), here or, if you prefer email, contact me [email protected] … I got some C/C++ test code from Jerry of Duckware, the inventor of vsynctester.com, that has a working example of D3DKMTGetScanLine() in .cpp modules, if you’re still having difficulty with the instructions above.

Moving Forward

Before utilizing any existing code (e.g. WinUAE or Tearline Jedi or anything else), I think the first priority is to blueprint it out and decide how to extend the RetroArch API. I propose adding retro_set_raster_poll as described… what do you think? Something with the least pain to add. The raster poll technique is probably a move we have to make regardless.

The hooking technique will have a huge impact on how we decide to frameslice-beamrace, and how flexible it can be made.

Did my post help? Need some code examples by email?

Hello - first time here, quick read through this and I see you’ve done some really good work.

My main thought from reading just a little here is that a lot of the latency seems to be tied up, in some sense, in things dependent on the frame rate.

That is, suppose you slow the emulator to half speed: some of the latency sources will remain unaffected (USB polling, kernel overhead, etc) whereas other sources will, surprisingly, double in latency (libretro slowdown).

A pretty easy way to see this is to use the aforementioned “frame advance” technique, where it can be seen that some of the latency sources will take 2-4 frames – no matter how slow the frames are.

People seem to be taking this for granted, but if you think about it for a second, this behavior is really strange and bizarre:

- Running the emulator at a lower framerate consumes less computational resources, yet leads to more latency.

- Running the emulator at a higher framerate consumes more computational resources, yet leads to less latency – until you reach the max speed your hardware can handle.

This is a great way to “blow up” some of the possible explanations proposed for input latency in the past. If your system is able to tolerate running the emulator at a frame rate that is even a little faster than usual – yet this net increase in computational resources somehow brings total latency down – then that says something profound about what is and isn’t really causing the latency.

I have some more thoughts in this regard, but I’m curious what thoughts other people have about this, as this is really surprising to me.

Also, I hadn’t seen the beamracing post above before making mine - very cool.

@mdrejhon: so if I understand correctly, rather than presenting the framebuffer all at once, you separate it into chunks that you present in a series of smaller flushes, right?

I’m trying to understand how this doesn’t lead to tearing. If we have 10 frame slices, is the thinking that the real raster is then updated 600 times per second total? It is clear that there will be intermediate states where the raster will be half-displaying the past frame and half-displaying the current one, but is the thinking that since we’re changing things so fast, that assuming no jitter, this will not perceptually manifest as tearing?

It’s pretty simple for me to see how this would reduce total latency by something like ~0.5 frame: the top scanline no longer has to wait until the entire buffer is finished to present (~1 frame difference), whereas the bottom scanline is the same (~0 frame difference), so on average you get a 0.5 frame difference.
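That estimate can be checked with a small calculation. The sketch below makes two simplifying assumptions that are mine, not the thread's: the emulator plots scanlines at an even pace across the refresh period, and a frameslice is handed over the moment its last scanline is done. It then measures how long, on average, a finished scanline sits waiting for the rest of its slice.

```python
def mean_wait_ms(lines: int, slices: int, refresh_hz: float = 60.0) -> float:
    """Mean time a rendered scanline waits for the end of its frameslice.

    slices=1 models presenting the whole framebuffer at once.
    """
    if lines % slices != 0:
        raise ValueError("lines must be divisible by slices in this sketch")
    period_ms = 1000.0 / refresh_hz
    line_ms = period_ms / lines
    slice_height = lines // slices
    total = 0.0
    for line in range(lines):
        # Index of the last scanline in the slice that contains `line`.
        last_line_of_slice = (line // slice_height + 1) * slice_height - 1
        total += (last_line_of_slice - line) * line_ms
    return total / lines


whole_frame = mean_wait_ms(lines=240, slices=1)
ten_slices = mean_wait_ms(lines=240, slices=10)
print(f"saved on average: {whole_frame - ten_slices:.2f} ms")
```

Under those assumptions, 10 frameslices save roughly 0.45 of a 60Hz refresh cycle on average, in line with the "~0.5 frame" estimate. This only covers the wait-for-completion part and ignores frame queues and driver buffering.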

My second thought is that you would get a further reduction if some main part of the framebuffer updating routine is currently done synchronously (is it?). This would mean that all sorts of stuff is blocked until a complete framebuffer is filled (like polling the input). Having 10x more partial framebuffer calls would let you do things like poll the input 10x per frame rather than just 1x, which would give you, more or less, another half a frame of latency reduction. Coupled with the above, you get a total latency reduction of roughly 1 frame less than doing the entire framebuffer synchronously. Is that the thinking?

@mdrejhon I think @Dwedit would be the best person to talk to about extending the libretro API for this purpose given his excellent past track record. Hopefully you guys can get some initial implementation going that way. That is assuming @Dwedit is interested.

Essentially, yes. The rest of the screen remains unchanged.

There are many ways to do this:

- Full screen blitting (Present() full screen of incompletely-rendered emulator framebuffer)

- Partial blitting (Present()ing only a rectangle)

- Front buffer (Writing new emulator scanlines directly to front buffer)

The same principles of beam racing jitter margin apply to all the above. All of them can be made to behave identically (with different GPU bandwidth-waste considerations) at the end of the day.

In all situations, the already-rendered (top portion) of emulator frame buffer is left unchanged and kept in the onscreen buffer. That, in itself, prevents tearing. Duplicate frames don’t show tearing. Likewise, duplicate frameslices don’t show tearing either. Voilà!

The incompleteness never hits the graphics output, as long as new emulator scanlines are appended to the framebuffer before the GPU reads the pixel row out to the graphics output.
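Here is a small simulation of that condition, as a sketch rather than real presentation code. It models the partial-blitting variant: time advances one scanline per tick, the emulator raster runs a fixed `lead` scanlines ahead of the real raster, and each finished frameslice is copied into a list standing in for the front buffer. The function name and the integer "frame id per row" representation are assumptions for illustration.

```python
def same_frame_scanout(lines: int, slices: int, lead: int, refreshes: int = 3) -> bool:
    """True if every row the real raster reads belongs to the frame being raced.

    A False result means a row on the wire came from another frame: either a
    slice was delivered too late (stale row) or too early (future row).
    """
    if lines % slices != 0:
        raise ValueError("lines must be divisible by slices in this sketch")
    slice_height = lines // slices
    front = [0] * lines   # frame id currently stored in each front buffer row
    emu = [0] * lines     # emulator's offscreen framebuffer, same layout
    emu_done = 0          # global count of scanlines the emulator has plotted
    for tick in range(lines * refreshes):
        # The emulator raster runs `lead` scanlines ahead of the real raster.
        while emu_done < tick + lead:
            emu[emu_done % lines] = emu_done // lines + 1
            emu_done += 1
            if emu_done % slice_height == 0:
                # Frameslice complete: copy only that slice to the front buffer.
                start = (emu_done - slice_height) % lines
                front[start:start + slice_height] = emu[start:start + slice_height]
        # The real raster reads exactly one row per tick, at constant speed.
        if front[tick % lines] != tick // lines + 1:
            return False
    return True
```

In this model with 240 lines and 10 frameslices, any lead from 24 scanlines (one frameslice) up to 240 scanlines (a full refresh) passes, while 23 or 241 fails. That range is one refresh cycle minus one frameslice, which lines up with the wraparound jitter margin discussed in this thread.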

It’s a working & proven technique, already implemented in 2 other emulators including WinUAE. Please read the WinUAE thread from Page 8 through to the end.

No tearing. Duplicate frames = no tearing. Duplicate frameslices = no tearing. If the screen is unchanged where the real-raster is, there’s no tearing.

And the jitter-margin is very healthy. If there’s a lot of random variance in the beam-race distance between emulator-raster and real-raster, there is no tearing, as long as it’s all within the jitter margin (which can be almost a full refresh cycle long, with a few additional tricks!) In practice, variances and latency can be easily brought to sub-millisecond on modern systems (>1000 frameslices per second).

Although I risk shameless self-promotion when I say this – being the founder of Blur Busters & inventor of TestUFO, and having co-authored peer-reviewed conference papers – I have a lot of practical skills when it comes to rasters. I programmed split screens and sprite multiplication in 6502 assembly language in SuperMon64, so I’m familiar with raster programming myself, too.

There’s no tearing because the screen is unchanged where the realraster is.

It’s already implemented & proven.

Also, there is no “perceptually” – tearing electronically doesn’t happen because it’s unchanged bits & bytes at the real-world raster. It is as if Present() never happened at that area of the screen.

Download WinUAE and see for yourself that there is no tearing.

Also, I posted an older BlurBusters article to hype it up a bit (ha!). Since then I’ve collaborated with quite a few emu authors. Shoutout to Toni Wilen, Calamity, Tommy and Unwinder (new RTSS raster-based framerate capping feature using “racing the VBI to do a tearingless VSYNC OFF” – not an emulator, but inspired by our research).

It depends on the VSYNC ON implementation – you can save 2 frames of latency – and it also depends on parameters like NVInspector’s “Max Prerendered frames”.

But not only that, the randomness of the input lag savings disappears (e.g. granularity errors of input reads caused by enforced conversion of midscreen input reads to beginning-of-screen input reads, especially with surge execution techniques). Say you’ve got a flight simulator game that does input reads at random positions throughout the emulator’s screen. Many emulator lag-reducing techniques will round off those reads to refresh cycle granularities out of necessity. So you get linearity distortions, too.

Emulators already do inputreads on the fly so the number of inputreads does not increase or decrease. They already rasterplot into an offscreen frame buffer, because many emulators need to support retro “racing the beam” tricks. What we’re simply doing is beam-racing the visibility of that already existing offscreen frame buffer. In the past, you waited to build up the whole offscreen emulator framebuffer (beamracing in the dark) before displaying it all at once. With the tricks, you can now beamrace the visibility of that existing offscreen progressively-more-complete emulator framebuffer. Basically scanning out more realtime.

In reality with good frameslice beamracing, and execution synchronization (pace emulator CPU cycle exact or scanline exact), the latency between input read and the photon visibility can become practically perfectly constant and consistent (not granularized by the frameslices) with only an exact latency of the decided beamrace margin. (In reality, your input device is limited by the poll rate, e.g. 1000Hz mouse, so that’s the practical new limiting factor, but that is another topic altogether)

The granularity is hidden by the jitter margin, as long as the realraster and emuraster are chasing at an exact distance apart (e.g. 1ms) despite the frameslicing occurring. It is like a bricklayer laying new bricks on a path while a person behind walks at constant speed, or like adding new sections to a road in granular steps while the cars behind move at a constant speed – the same “relativity” basis. As long as you don’t go too fast, you don’t run into the incomplete section of the road (or on the screen: tearing), but the realraster and emuraster are always moving at a constant speed, despite the “intervalness” of the frameslicing.

You simply adjust the beamracing margin (e.g. chase margin) to make sure the new frameslices are being added on time before the constant-moving realraster hits the top edge of a not-yet-delivered frameslice (that’s when you get artifacts or tearing problems). Even when presenting frameslices, the GPU still outputs one scanline at a time out of the graphics output. So the frameslices are essentially being “appended” to the GPU’s internal graphics output buffer ahead of the current constant-rate (at horizontal scanrate) readout of pixels by the RAMDAC (analog) or digital transceiver (digital). Sometimes they’re bunched into 2 or 4 scanlines (DisplayPort micropackets). But other than that, there’s no relationship between frameslice height and graphics output – the display doesn’t know what a frameslice is. The frameslice is only relevant to the GPU memory. The realraster and emuraster continue at constant speed, and the frameslice boundaries jump in granular steps but always stay in between the two (realraster and emuraster, screen-height-wise), so tearlines never become visible. As long as it’s within the jitter margin, there can safely be variances – it doesn’t have to be perfectly synchronous. The margin between emuraster and realraster can vary.

You simply just have to keep appending newly rendered framebuffer data (emulator scanlines) to the “scanning-out onscreen framebuffer” before the pixel row readout (real raster) begins transmitting the pixel rows. Metaphorically, this “onscreen framebuffer” is not actually already onscreen. The front buffer is simply a buffer directly at the graphics output that’s got a transceiver still reading it one scanline at a time (at exact interval, without caring about frame slice sizes or frame slice boundaries), serializing the data to the graphics output. All of the beamracing techniques discussed above are simply various methods of appending new data to the front buffer before the pixel row begins to be transmitted out of the graphics output. It’s just the nature of serializing a 2D image into a 1D graphics cable, and it works the same way from a 1930s analog TV broadcast through a 2020s DisplayPort cable. That’s why frameslice beam racing works on the entire 100 year span without tearing.

Properly implemented frameslice beam racing is actually more forgiving than things like Adaptive VSYNC (e.g. trying to Present() in the VBI between refresh cycles to try to do a tearingless VSYNC OFF), since the jitter margin is much bigger than the height of the VBI. So tearing is less likely to happen! And if tearing does happen, it only briefly appears then disappears as the beamracing catches up into the proper jitter margin.

The synchronousness of input reads is unchanged, but the synchronousness of input-reads-to-photons becomes much closer to the original machine. Beam racing replicates the original machine’s latency. That’s what makes it so preservationist friendly and more fair (especially when head-to-heading an emulator with a real machine). So before a real-machine arcade contest, one can train in an emulator configured to replicate original machine latencies via frameslice beam racing.

Some emulators will surge-execute a framesliceful, but that’s not essential. If you wanted, you can simply execute emulator code in cycle-exact realtime (like an original machine) and the raster line callback will “decide” when it’s time to deliver a new frameslice on a leading “emu-raster percentage ahead of real-raster” basis (or simply use a 2 frameslice beam race margin). The emuraster and realraster always move at constant scan-rate in this case (emuraster at emu scanrate, realraster at realworld scanrate), with the frameslice boundaries jumping forward in granular steps but always confined between the emuraster and realraster. Tearing never electronically reaches the graphics output, so tearing doesn’t exist, because the previous frameslice (that the realraster is currently single-scanline-stepping through) is still onscreen unchanged.

RunAhead is superior in reducing lag in many ways, but beam racing (sync between emuraster & realraster) is the only reliable way to duplicate an original machine’s exact latency mechanics. As a bonus, it is less demanding on the machine (at low frameslice counts). All original times are preserved between the realworld/emulator inputread and the pixels being turned into photons – even for mid-screen input reads on the original machine, and even for certain games that have jittery-on-original-machine input reads that sometimes read right before/after the original machine’s VBI. That causes a 1/60sec (16.7ms) randomly varying latency-jumping jitter on emulators doing surge-execution techniques, since some input reads miss the VBI and get delayed. Beamracing avoids such a situation.

Whatever distortions to input reads occur with all kinds of emulator lag-reducing techniques, all of that disappears with beam racing, and you only get a fixed latency offset representing your beamracing margin. With a high frameslice rate or front buffer rendering, that margin can become really tight: sub-millisecond relative to the original machine when both the original machine & emulator are connected to the same display. Yet it still scales all the way down to simpler machines, remaining sub-frame latency even on Android/Raspberry PI with coarse 4-frameslice beamracing, by using a low frameslice count and a wider (but still sub-frame) beam racing margin.

So while RunAhead is superior in many ways, and can reliably get less latency than the original machine – frameslice beamracing is much more preservationist-friendly, replicates original machine latency for all possible inputread timings (including mid-screen and jittery input reads).

P.S. If you download WinUAE, turn on the WinUAE tinted-frameslice feature (for debugging/analysis), and you’ll see the tearing (jitter margin debugging technique). It’s fun to watch and a very educational learning experience. Turn it off, and the tearing is not visible – horizontal scrolling platforms look like perfect VSYNC ON.

EDIT: Expanded my post

@mdrejhon: OK, thanks - I’ll read in more detail to write a more detailed response, but as I quickly look at the WinUAE thread, I have a question about this:

Yes, beam racing improves framebuffer lag by sometimes almost an order of magnitude – 40ms worst case to sub-5ms is directly in the territory of full order of magnitude. And can still go closer to near 0ms! That’s literally a jawdropper and totally felt in pinball gaming.

Based on what you wrote above, it looks like rendering delay would be reduced by something like 0.5-1 frames (8-16 ms) using this technique. But going from 40ms to 5ms corresponds to decreasing by roughly 2 frames. How does splitting the frame into slices lead to multi-frame reduction?

One other question: let’s say the real raster has just finished the last frameslice. When the real raster restarts again from the top, does it erase the entire screen and begin again, progressively filling in the new frame (with the bottom unfinished parts being completely erased to black)? Or does it only erase the top bits and start progressively overwriting those, leaving the remnants of the old frame unaltered at the bottom?

Don’t forget buffer backpressure mechanics and the frame queue concepts (two semi-independent extra lag factors). I explained that – depending on circumstances – VSYNC ON can frequently lead to more than 1 frame of input lag. Not all VSYNC ON implementations are identical across applications/drivers/programming techniques – in many graphics drivers, it is often a shallow frame queue (because that’s more stutterless than a traditional double buffer technique). There are indeed ways to force it to behave like a double buffer, but when running fully flat-out, you can end up having 2 more frames of lag unless you do special techniques.

In addition, consider varying-lag distortion effects. I edited the above post to make it bigger to cover some of the concepts of how it’s not always an exact “X frames of lag” – one input read may receive 33ms lag and the next input read may receive 16ms of input lag, because of lag-granularity caused by varying timing of the original machine’s input read, and how it interacts with the boundaries of the surge-execution intervals of various lag-reducing techniques – how the emulator lag-distorts the input read (or not). Most retro games read input at a consistent time, but that timing can jitter on the original machine, and it might jitter across the surge-execution intervals, creating a lag granularity effect that did not exist on the original machine. What this can potentially mean is that button-mashing may feel erratic in the emulator and consistent on original – for a specific retro game (that had varying-in-refresh-cycle input reads). There are pros/cons to all kinds of input-lag-reduction methods.

Good emulators with optimization may have only 1 frame of input lag (next frame response) but not all of them. Yet none (except beam raced frameslicing) can do same-frame response, e.g. midscreen input reads changing screen content at the bottom of the same display refresh cycle (no need to wait till next refresh). And none can do a guaranteed subframe fixed latency offset to input reads (emulators that try for sub-frame latencies often subject them to refresh-cycle-rounding-off effects, caused by surge-execution distortions). Beamraced emulation (render and scanout on the fly) is better able to guarantee consistent lag for all possible input read timings throughout any part of any emulator refresh cycle, relative to the real-world refresh cycle.

Obviously, RunAhead is superior for many things while beamracing is another good tool for faithful replication of original machine lag while reducing system requirements (during low frameslice rates).

Full answer will require a multi-page reply to explain things. If you want, we can move to the Area51 forum of the Blur Busters Forums to discuss this part further.

I’ll give a partial answer to help conceptually.

Remember, cable scanout is sometimes totally different from panel scanout. There’s no concept such as “clearing the screen” on the monitor side. So forget about it, the emulator can’t do anything about it. (In reality, an impulse display will automatically clear, and sample-and-hold LCD displays will hold until the next refresh cycle – but the considerations are exactly identical regardless of whether you connect an original machine or an emulator to it.) So this discussion is irrelevant here. Stop guessing displayside mechanics for now; we’re only comparing emulator-vs-original connected to the SAME display, whether it be the same CRT or the same LCD.

We just want the cable to behave identically where possible. (Internal built-in displays serialize in a cable-like way too: phones, tablets, and laptops also scan sequentially.)

Focus on the cable scan POV, and ignore the display scan POV. So let’s focus on cable scan-out – the GPU act of reading one pixel row at a time from its front buffer into the output at exact horizontal scanrate intervals.

Also, on the GPU side, Best Practice #9 recommends against clearing the front buffer between emulator refresh cycles, in order to keep the jitter margin huge (wraparound style).

If you’re an oldtimer – Another metaphor (if it is easier to understand frameslice beamracing) is an old reel-to-reel video tape that runs through a record head and a playback head simultaneously.

The Tape Delay Loop Metaphor Might Help

Technically, nothing stops an engineer from putting two heads side by side feeding a tape through both – to record and then playback simultaneously – that’s what an old “analog tape delay loop” is – a record head and a playback head running simultaneously on a tape loop.

Metaphorically, the tape delay loop represents one refresh cycle in our situation. In our beamracing case, the metaphorical “record head” is the delivery of new scanlines (even if it’s surged frameslicefuls at a time) to the front buffer, ahead of the “playback head”, the one-scanline-at-a-time readout of the front buffer into the graphics output (at exact horizontal scanrate intervals).

The front buffer isn’t onscreen instantly; it’s still being read out one pixel row at a time into the graphics output at an exact constant rate (horizontal scanrate). So you can always keep changing the undelivered portions of the front buffer (including undelivered portions of a frameslice), ad infinitum, as long as your emu raster (new frame buffer data being put into the front buffer one way or another) stays ahead of the real raster (the pixel row readout to output). This is a great way to understand why we have a full loop of a wraparound jitter margin (full refresh cycle minus one frameslice worth).

Input lag is decreased by putting the playback head as close as possible to the record head. That’s tightening the metaphorical beam race margin.

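- The jitter margin is the tape running from the playback head around the loop to the record head (tape that has already been played back).

- The race margin is the tape running from the record head to the playback head (tape that has been recorded but not played back yet).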

So, a new looped safety jitter margin of one full refresh cycle minus one frameslice.

The entire tape loop represents one refresh cycle, looping around. So for 1080p, you can have a >900-scanline jitter margin with zero tearing, if you use the wraparound-refresh-cycle technique as described above in step 9 of Best Practices. Ideally you want to race with tight latency, though. If you do a “2 frameslice beam race margin”, that means with 10 frameslices per refresh cycle, you get a 1-frameslice verboten region (tearing risk), an 8-frameslice race-too-fast safety margin, and a 1-frameslice race-too-slow safety margin – before tearing appears. That’s 15ms of random beam race error you can get with zero tearing!!
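The arithmetic behind those numbers can be written out as a short sketch. It assumes the frameslices divide the 1080 visible scanlines evenly and that the whole 1/60sec refresh period is spread over them (so VBI time is ignored); the function and key names are made up for illustration.

```python
def jitter_budget(total_lines: int, slices: int, race_margin_slices: int,
                  refresh_hz: float = 60.0) -> dict:
    """Split one refresh cycle into verboten, too-fast and too-slow regions."""
    slice_height = total_lines // slices
    slice_ms = (1000.0 / refresh_hz) / slices
    verboten = 1                            # slice the real raster is reading
    too_slow = race_margin_slices - 1       # slack before the emulator is late
    too_fast = slices - race_margin_slices  # slack before it laps the raster
    safe = too_fast + too_slow
    return {
        "verboten_slices": verboten,
        "too_fast_slices": too_fast,
        "too_slow_slices": too_slow,
        "safe_lines": safe * slice_height,
        "safe_ms": safe * slice_ms,
    }


budget = jitter_budget(total_lines=1080, slices=10, race_margin_slices=2)
print(budget)
```

For 1080p at 60Hz with 10 frameslices and a 2-frameslice race margin, this gives 8 too-fast slices, 1 too-slow slice, 972 safe scanlines and 15ms of tolerated error, matching the figures above.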

In our case, metaphorically, frameslice beam racing is simply the record head surging batches of multiple scanlines onto the metaphorical tape loop (e.g. a movable record head that intermittently records faster than the playback head). The playback head’s playback speed is totally merrily unchanged!! (i.e. the pixel row readout from front buffer to GPU’s output jack). All is well as long as the record head never falls behind and collides with the playback head (aka tearing artifact). Thankfully this is just a metaphor, and tearlines won’t wreck the metaphorical tape mechanicals and tape loop permanently (ha!) – beam racing can recover during the next refresh cycle (aka only a 1-refresh-cycle appearance of tearing artifact). Metaphorically, front buffer rendering (adding one scanline at a time) means the record head doesn’t have to surge ahead (it can record at the same velocity as the playback head).

You can adjust the race margin to somewhere far enough back that your margin is never breached. That is the metaphorical equivalent of the distance between the tape record head (adding new emu lines to front buffer) and the tape playback head (GPU output jack beginning transmission of 1 pixel row at a time).

That’s why it’s so forgiving if properly programmed, and thus can be made feasible on 8-year-old GPUs, Android GPUs, and Raspberry PI GPUs, especially at lower frameslice counts on lower-resolution framebuffers (which emulator framebuffers often are). We’ve found innovative techniques, and it surprised us that they haven’t been used before now – it’s conceptually hard for someone to grasp until they go “Aha!” (like via the user-friendly Blur Busters diagrams, etc).

I can conceptualize this visually in a totally different way if you were not born in the era of analog tape loop, but this should help (in a way) to conceptualize that we’ve successfully achieved a 900+ scanline safety jitter margin for 1080p beam racing, even with wraparound (e.g. Present()ing bottom half while we’re already scanning top half, and Present()ing top half while we’re already scanning bottom half – both situations have NO tearing, because of the way we’ve cleverly done this, with Best Practice #9 two posts ago…) – making it super-forgiving and much more usable on slower-performing systems. Smartphone GPUs can easily do 240 duplicate-frames a second, it’s only extra memory bandwidth to append new frameslices, anyway.

So that is how a 900+ scanline fully-looped across-refresh-cycle wraparound jitter margin is achieved with 1080p frameslice beamracing. At 60Hz, this means up to ~15ms range of beam race synchronization error before tearing appears! This helps soak up performance imperfections very well during transient beamrace out-of-sync, e.g. background software. And the beamrace margin can also be a configurable value, as a tradeoff between latency and tearline-appearance-during-duress-situations.

Modern systems can easily do submillisecond race margins flawlessly, while Android/PI might need a 4ms race margin - still subframe latency!

Yes, in the extreme case frameslices can become one pixel row with no jitter margin (like how my Kefrens Bars demo turns a GeForce 1080 into a lowly Atari TIA with raster-realtime big-pixels at nearly 10,000 tearlines per second) but emulators like the jittermargin technique that hides tearing by simply keeping graphics unchanged at realraster and keeping emuraster ahead of realraster. (Like the tape delay loop metaphor explained above).

This is my partial answer. I have to go back to work, but hopefully this helps you understand better…

Thank you! Very helpful! I admit that some of the terminology is fairly new for me, so I spent a good amount of time searching and found a glossary you posted here that was very useful in getting in sync with some of this stuff.

I also liked the tape loop metaphor that you used - rather appropriate, since my background is primarily in audio digital signal processing (with some occasional image processing thrown in). Video processing is a little different, but so far seems straightforward enough, given the way I’m used to looking at things. Many of the terms you use, and some of the other terms I see thrown around in this discussion, are things that I generally recognize from general DSP jargon. Some others are quite new entirely, so I hope I can quickly get to understanding those terms correctly.

With respect to cable scanout vs panel scanout, after looking at your glossary, I think you’re right that panel scanout is irrelevant to the discussion and cable scanout is what really matters here. Likewise, I’m also less interested in things like USB poll interval lag (and variance). The user will be able to supply their own TV and controller, which will hopefully work well enough. What I’m most interested in is getting my head wrapped around the middle, where there are huge chunks of latency that could, hopefully, be reduced using a method like this. Beyond that, the user can tweak their TV/controller if need be, independently from this - play around with USB poll intervals, or lower the TV’s resolution if need be, or just generally find settings that work.

I am really quite surprised to see that VSync can cause multiple frames of input lag. I thought that VSync synchronizes the monitor playback rate with the GPU framerate, so that there is no jitter and hence no tearing. What I don’t get is, when people use the “Frame advance” feature, where they push a button on the controller and then manually advance frames only to see it register 2-4 frames later – is VSync somehow doing that? Would beamracing be able to help lower latency even in that scenario?

I think I’ll start there for now. Your posts are very detailed and I rather appreciate that - I’ll probably need to read a few times before I get it all. For now I’m most interested in understanding the basic signal path and the major components driving latency which come from things like video processing, rather than input polling and such.

No, you can’t measure the vsync lag with that method.

What it shows you is the game inherent lag to react and the way input is polled by the emulator.

Vsync adds lag on top of that. You can reduce it with methods such as “Hard GPU Sync” in RA or “Maximum Pre-Rendered Frames” in the Nvidia Control Panel.

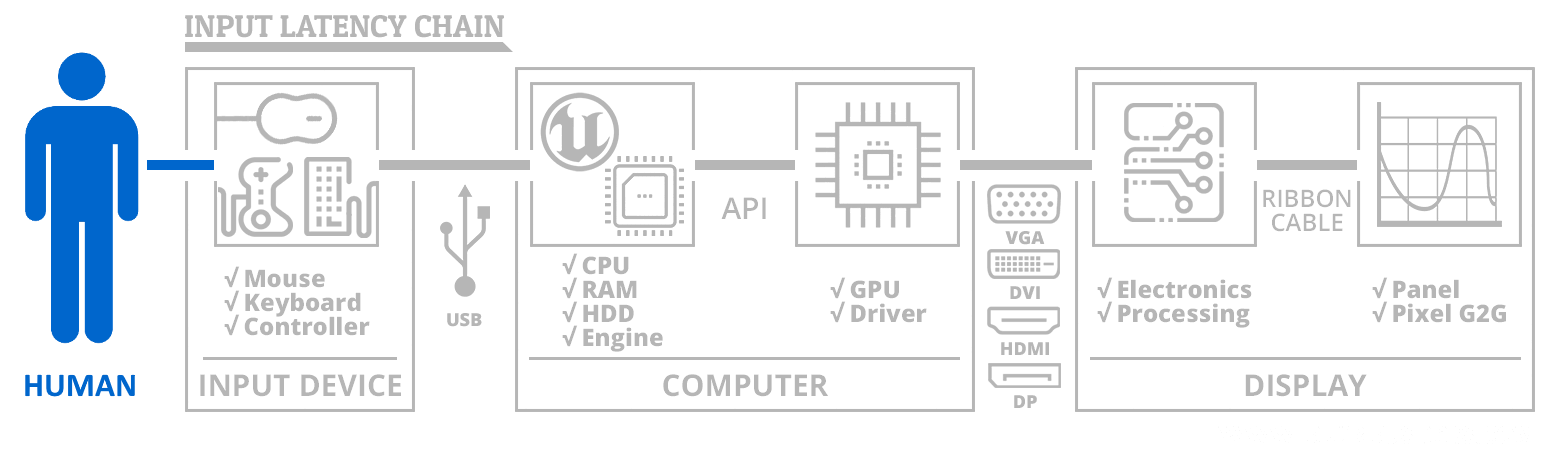

You’re also missing on what can be the worst source of lag: the screen inherent lag before it starts displaying an image.

The input lag chain is a very complex topic.

To simplify, let’s focus only on emulator thru graphics output.

Traditionally, an unoptimized emulator on unoptimized drivers can add lag at each of these stages:

- Input read (consider whether the emulator does preemptive real input reads before rendering the emulator frame, or does input reads in realtime while doing the render, i.e. simulated raster scanout)

- Render emulator frame (varies, up to 1/60sec lag, depending on how intensive it is, and on whether or not this jiffy is executed faster to deliver the individual emulator frame sooner. The input read can be early in the emulator frame, versus late in the emulator frame)

- Deliver to graphics card (varies, up to 1 frame lag) - Present() blocks until there is room in the frame queue. That’s buffer backpressure lag!

- Any frame queues used in the graphics card (varies, 0, or 1 or 2 frame lag). The graphics driver delivers any prerendered frames in sequence as consecutive individual refresh cycles.
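Adding up the upper bounds from that list gives a rough worst-case budget for 60Hz. This is only a back-of-envelope sketch: it uses the maximums quoted above, treats the stages as simply additive, and leaves out the input read stage because no figure is given for it.

```python
FRAME_MS = 1000.0 / 60.0  # one 60Hz refresh cycle

# (stage, maximum lag in frames), taken from the list above. Minimums are left
# out because only upper bounds are given for most stages.
WORST_CASE_FRAMES = [
    ("render emulator frame", 1.0),
    ("deliver to graphics card (Present() backpressure)", 1.0),
    ("graphics driver frame queue", 2.0),
]


def worst_case_ms(stages=WORST_CASE_FRAMES, frame_ms: float = FRAME_MS) -> float:
    """Sum the per-stage maximums, treating the stages as simply additive."""
    return sum(frames for _, frames in stages) * frame_ms


print(f"worst case: {worst_case_ms():.1f} ms")
```

That is 4 frames, or about 67ms, from the start of emulator rendering to the graphics output in the unoptimized case.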

You can make it efficient and tight (1 frame lag) but in reality, it can be awful. Some very old Blur Busters input lag tests of Battlefield 4 had over 60ms input lag even on a display that had less than 10ms input lag, and even when a frame rendered in only 15ms. Conversely, CS:GO reliably achieved approximately 20ms or so. Those tests were done almost five years ago. There are huge variances between software, even for VSYNC ON, OFF, GSYNC… Software plays a role in how much lag is added and in how the sync workflows are treated (that’s why you hear of various tricks such as “input delaying” to move the input read closer to output and reduce lag).

Usually maximum prerendered frames is 1, and that is necessary for compatibility with lots of things such as SLI which must multiplex frames from multiple cards into the same frame queue. It also massively improves frame pacing. A few years ago, there was a controversy with the disappearance of the “0” setting in NVInspector for Max Prerendered Frames.

You can use tricks to reduce a lot of lag in this lag chain, but VSYNC ON lag in games can vary humongously between different apps. It’s simply the time interval between the input read (which necessarily occurs before rendering in most 3D games) and the pixel hitting the output jack (the point A to B we are limiting scope to for simplicity).

Beam raced frameslices do input read, render AND output in essentially realtime. Just like the original machine did. Faithfully. With it, there can be a mere 1 millisecond between an input read and the actual reacted pixels hitting the graphics output. For any game that does continual input reads mid-scanout, the photons of that can actually hit your eyes in subframe time. Like a mid-screen input read for bottom-of-screen pinball flippers.

You still need to change a lot in every single emulator so it renders the slices and even polls more often than once per refresh, and is that even accurate? I’m pretty sure it’s not for every single case.

I’ll respond more to @mdrejhon in a sec, but as a quick response to the above, how much of this would be with the individual cores and how much would be within the libretro API?

@mdrejhon: thanks for that. As a rough pass I think I get the idea of why VSYNC can sometimes lead to multi-frame delay. I do think I’m continuing to get bogged down a little bit w/ the terminology here though.

Right now, the way I think of input timing latency is as follows:

1. The input is a delta function, and we are trying to figure out the total latency (or group delay) in the “impulse response.”

2. The signal path consists of a string of delay lines, one after the other, each of which adds some time delay to the signal.

3. The total time delay is the sum of the time delays of each component.

4. Rather than all of the delays being set in stone, the delay of each component is a random variable according to some probability distribution. We know the range of values each can take, the probability of each, and the mean and variance. For instance, a 100 Hz USB poll interval is a uniform distribution on 0-10ms, which has a mean of 5ms and a stdev of ~3ms.

5. The components are “approximately independent” of one another, at least given reasonable running conditions. Meaning the USB poll interval position does not correlate with, for instance, the refresh interval position, or whatever. Both are equally random, or if there is any correlation, it’s negligible.

6. Because of #5, the expected value of the total latency is the sum of the expected value of the latency of each component.

#5 is probably where the gray area is. Some components might correlate with one another… sometimes… only under certain fundamental conditions… and it’s hard to tell where. That seems to be the basic problem.

If we have two components that tend to correlate significantly, so that a better latency on one suggests a higher or lower probability of a better latency on the other, then we can chunk them into a single component. Ultimately we can always arrive at some chunks of components that do not correlate with one another in any significant way, given at least reasonably normal working conditions.
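As a rough sketch of the model above (not anyone's actual measurement code), the chain can be treated as a list of independent uniform delays. The 100 Hz USB poll figure is from the example above; the 60 Hz refresh wait is a hypothetical second component added only for illustration. Note that the means add whether or not the components correlate; it is the variances that only add when the components are uncorrelated.

```python
import math

def uniform_delay(low_ms, high_ms):
    """Return (mean, variance) of a delay uniformly distributed on [low_ms, high_ms]."""
    mean = (low_ms + high_ms) / 2
    variance = (high_ms - low_ms) ** 2 / 12
    return mean, variance

def total_latency(components):
    """Combine delay components: means always add, variances add if uncorrelated."""
    mean = sum(m for m, _ in components)
    variance = sum(v for _, v in components)
    return mean, math.sqrt(variance)

# 100 Hz USB poll: uniform on 0-10 ms (from the post above).
usb_poll = uniform_delay(0.0, 10.0)
# Hypothetical second component: waiting somewhere inside a 60 Hz refresh interval.
refresh_wait = uniform_delay(0.0, 1000.0 / 60.0)

mean_ms, stdev_ms = total_latency([usb_poll, refresh_wait])
print(f"USB poll alone: mean {usb_poll[0]:.2f} ms, stdev {math.sqrt(usb_poll[1]):.2f} ms")
print(f"Whole chain:    mean {mean_ms:.2f} ms, stdev {stdev_ms:.2f} ms")
```

Two components that do correlate would be replaced by a single measured (mean, variance) entry in the list, which is the "chunking" described above.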

Let’s just start with that, if that makes sense.

Actually, for at least one module, it’s easier than you think.

WinUAE told me it was a quick modification to add basic 60 Hz support.

This is because for “raster accurate” emulation modules (e.g. Nintendo and Super Nintendo emulation):

- The emulator module is already plotting one scan line at a time into an offscreen buffer. It’s already happening with the NES module.

- The emulator module is already (usually) doing real time input reads while plotting scan lines. It’s already happening with the NES module.

- The new raster poll API simply lets the centralized beamracer “peek” at the ALREADY EXISTING module’s ALREADY EXISTING OFFSCREEN FRAMEBUFFER, and grab a frameslice from it.

The centralized code will do the peeking, and the centralized code will do the grabbing of the frameslice itself. The raster poll is simply giving the central code opportunities to do early-peeks of the emulator’s existing offscreen framebuffer, every time a new emulator scan line is written to it.
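To make the division of labour concrete, here is a toy sketch in Python. Every name in it (`BeamRacer`, `raster_poll`, `grab_slice`, `emulate_frame`) is hypothetical and is not part of libretro or any existing core. It assumes the scan line count divides evenly into slices, and it only records which rows would be grabbed instead of presenting them.

```python
class BeamRacer:
    """Hypothetical central code: peeks at a core's framebuffer via raster polls."""

    def __init__(self, total_lines, slices_per_frame):
        # Assumes total_lines is an exact multiple of slices_per_frame.
        self.lines_per_slice = total_lines // slices_per_frame
        self.next_slice_start = 0
        self.presented = []  # (first_line, end_line_exclusive) per grabbed slice

    def raster_poll(self, framebuffer, lines_done):
        # Called by the core after each emulated scan line is written.
        while lines_done - self.next_slice_start >= self.lines_per_slice:
            start = self.next_slice_start
            end = start + self.lines_per_slice
            self.grab_slice(framebuffer[start:end], start, end)
            self.next_slice_start = end

    def grab_slice(self, rows, start, end):
        # A real implementation would wait for the real raster here and
        # present the slice with VSYNC OFF; this sketch only records it.
        self.presented.append((start, end))

    def end_of_frame(self):
        self.next_slice_start = 0


def emulate_frame(racer, total_lines=240, width=256):
    """Stand-in for a raster-accurate core plotting one scan line at a time."""
    framebuffer = [[0] * width for _ in range(total_lines)]
    for line in range(total_lines):
        framebuffer[line] = [line % 256] * width  # "plot" the scan line
        racer.raster_poll(framebuffer, line + 1)  # the only hook the core adds
    racer.end_of_frame()
    return framebuffer


racer = BeamRacer(total_lines=240, slices_per_frame=10)
emulate_frame(racer)
print(racer.presented)
```

The core never learns what a frameslice is. It only reports how many scan lines it has finished, and the slicing policy lives entirely in the central class.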

For at least one of the easiest RetroArch modules, it looks like only a 10 line modification.

All the complexity is centralized (probably ~1000 lines of code, 3-4 days of programming work). Please re-read my proposal. That’s where the RetroArch work is cut out.

More difficult cores will take a lot more time, but once the core libretro is made beamrace compatible, beamrace support can be added one module at a time. And from what I have seen, the easiest module will only need a simple hook (10 lines) to turn it into a successful beamracer.

Emulator authors – over the last two decades – have done an amazing job refining realtime beamracing on the emulator offscreen buffer already. So there is not much work left to glue on the remaining step. So to the authors of the “easy” modules, thank you so much for making it so easy to beamrace for real!

It’s the last piece of the puzzle that most emulator programmers do not understand; the “black box” between Present() and photons – but people like me do. That 1% is complex to understand and this is why I am writing big posts to explain that 1% needed to finish the “full beamrace chain”.

And, even if it’s easier than expected with some modules (NES)…

…It will also be more difficult than expected with other modules (who knows which ones). It depends on how much of the beamracing chain they’ve already completed.

The fact is that both extremes exist.

The beauty is that once it’s implemented in libretro, it can be implemented one module at a time, one by one, beginning with the easiest modules – taking our merry time.

Once the easy module is done, it gives everyone the “aha” moment, and makes some people understand frameslice beam racing much better. (The remaining 1% step needed to finally pull an emulator’s existing internal beamracing out to the real world display).

For some modules, over 99% of the beamracing work is already done. 20 years of beamracing development has done that already, but never beyond the Present() API.

The major complexity will be making libretro compatible. If there’s a lot of layering (e.g. lack of a VSYNC OFF mode, and a lot of black box layers), it has to be refactored somewhat. Basically, VSYNC OFF support needs to be added to libretro in order for frameslice beamracing to work. It might or might not be a royal headache.

But on the NES module side (at least), it’s quite minor changes there for that particular module since it already does internal beamraced input reads and internal beamraced line-plots into its internal framebuffer.

For the time split between “the core, and the easiest module” – I guesstimate over 95% of programming time will be focussed on the centralized code, and 5% of the time spent on the easiest module. Once done, the bridges can be crossed for the remainder of the modules.

The hardest module might need lots of code – and/or rewriting – to be compatible, but the easiest modules will essentially only need 10 lines of modifications.

The already cycle-exact and raster-exact modules will obviously be the easiest modules, especially if they’re simply (as the NES module is) rasterplotting one line at a time to an internal frame buffer. Those types will be easy to convert to frameslice beamracing.

The emulator modules don’t even need to know what the heck a frameslice is, if one re-reads my proposal.

In my proposal, all the emulator module is doing is letting the core code (centralized raster poll code) do early-peeks at the existing offscreen beamraced buffer that most 8-bit and 16-bit emulators already maintain, in order to be compatible with retro-era raster interrupts.

I’m going to cover this from the opposite side first.

This is an easier argument for me to make, because there are fewer variables, which makes the latency math simpler.

I’ve got a 1000fps high speed camera. With a test program, I’ve successfully got API to photons in just 3 milliseconds on my fastest LCD display. That is Present() to photons hitting the camera sensor. That includes LCD GtG. That includes DVI/DisplayPort latency. That includes monitor processing latency. I’m able to get this for top edge, center, and bottom edge of screen.

I can probably get <1ms with a CRT and an older graphics card with a direct adaptorless VGA output.

But let’s simplify. So, now we already know the baseline absolute-best proof from my high speed camera, and it is proven “realtime” API to photons, by all practical raster extent.

I’ve also done brief tests that showed 4ms-5ms from mouse click to photons, for some extreme blank-screen VSYNC OFF tests. Now, we know that DisplayLag.com can have a display latency difference from top/center/bottom (e.g. 2ms, 9ms, 17ms). Obviously for simplicity, most sites only report average latency (VBI to screen middle), which is often half a refresh cycle. That is why you never see numbers less than 8.3ms on sites such as DisplayLag.com for a 60Hz display (1/2 of 1/60sec = 8.333ms). That’s simply a stopwatch from VBI to raster. With beamracing, the lag is vertically uniform (e.g. 2ms, 2ms, 2ms TOP/CENTER/BOTTOM) for Present-to-Photons during VSYNC OFF frameslice beamracing.

There are micro lag gradients within frameslices, caused by the granularity of a frameslice versus the one-pixel-row-at-a-time scanout of the graphics output. That can be filtered with the tape loop metaphor: a consistent, unvarying subframe emulator-pixel-to-photons time, held as a fixed screen height difference between emu raster and real raster. All of it is easily centralizable inside the central code of the raster poll API; it’s simply a busysleep on RDTSC or QueryPerformanceCounter.
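The busysleep idea can be sketched as follows, using Python's `time.perf_counter_ns()` as a stand-in for the high-resolution counter (on Windows, CPython backs it with QueryPerformanceCounter). The function names and the 1 ms delay are illustrative assumptions; a real beamracer would derive the deadline from the estimated real raster position, not from a constant.

```python
import time

def busy_wait_until(target_ns):
    """Spin on the high-resolution counter until target_ns is reached."""
    while time.perf_counter_ns() < target_ns:
        pass  # deliberately no sleep(): OS sleep granularity is too coarse here
    return time.perf_counter_ns()

def hold_slice(slice_ready_ns, fixed_delay_ns):
    """Hold a finished slice until a fixed delay after it became ready."""
    return busy_wait_until(slice_ready_ns + fixed_delay_ns)

ready = time.perf_counter_ns()
released = hold_slice(ready, 1_000_000)  # 1 ms, an illustrative value only
print(f"slice held for {(released - ready) / 1e6:.3f} ms")
```

Spinning burns a CPU core for the duration of the wait, which is the price paid for a release time that does not depend on scheduler wake-up jitter.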

Briefly going offtopic, but currently the least-laggy LCDs via DisplayPort/DVI tend to be ~3ms for API-to-photons if we're focussing on minimum lag (top lag, or beamraced pixel-for-pixel lag) -- subtract about 8.3ms from the number you see on DisplayLag.com and that's your attainable beamraced input lag. Certainly there are less laggy displays and more laggy displays, but some LCDs are almost as fast as CRTs in response (e.g. just digital transceiver lag & GtG lag, with a few scanlines of buffered micropacket lag). Although we're not worried about panel scanout, the fact is some of them have realtime synchronous cable-to-panel scanout abilities (also see www.blurbusters.com/lightboost/video for an older example high speed video of how an LCD scans out, and how some blur-reduction strobe backlights work, e.g. LightBoost, ULMB). In non-strobed operation, it's essentially a fast-moving GtG fade zone chasing behind the currently-being-refreshed pixel rows, which are refreshed practically on the fly directly from the cable (with only line-buffer processing for overdrive, unlike old LCDs that often framebuffered the full refresh cycle first).

So all we’re worried about is increases in latency over this absolute-best baseline.

My graphics card can do up to 8000 frameslices per second (Kefrens Bars demo).

A 1000Hz mouse poll adds an average of 0.5ms latency (the midpoint average of 0ms…1ms latency). There is some experimentation being done with 2000Hz mice and overclocked 8000Hz mice, so it’s theoretically possible to get lower – USB latency of 0.125ms has been successfully achieved with mouse overclocking.

Emulator frameslice granularity at 2400 frameslices per second (40 frameslices per refresh cycle, HLSL filters disabled, GTX 1080 Ti extreme case) with a 1.5 frameslice average beamrace margin = 1.5/2400sec latency = 0.6ms latency.

So mouse poll latency 0.5ms and beamrace margin latency 0.6ms = 1.1ms lag for input-read-to-pixel-transmitting-on-wire. It could be even less obviously given my computer’s performance. But this is already incredibly small.

While doable on i7’s with powerful GPUs, it is overkill for many. A lot are happy with 10-frameslice beamracing (600 frameslices per second). 2 frameslice lag equals 2/600sec or 1/300sec or 3.333ms for the common 10 frameslice WinUAE setting (I’m excluding the 0.5ms from 1000Hz USB input poll, obviously).

It continues to scale down to more lenient margins, like 4-frameslice or 6-frameslice beamracing on slower platforms (e.g. Raspberry Pi, Android, etc). There’s more lag for that, but still subframe lag compared to any other possible non-RunAhead lag reduction approaches. 4 frameslices with 1.5 slice beamrace margin is still only ~6ms lag – incredibly low for a Raspberry Pi, and I suspect 10 frameslices are doable on the newer mobile GPUs. At 600 frameslices per second, that gets real close to more exactly reproducing faithful-original latencies.
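The arithmetic in the last few paragraphs can be reproduced with a short sketch. The function names are made up for illustration, and a 60Hz refresh is assumed throughout, as in the figures above.

```python
def average_poll_latency_ms(poll_hz):
    """Average wait for the next input poll: half of one poll interval."""
    return 1000.0 / poll_hz / 2.0

def beamrace_margin_ms(slices_per_refresh, margin_slices, refresh_hz=60):
    """Latency added by rendering margin_slices ahead of the real raster."""
    slices_per_second = slices_per_refresh * refresh_hz
    return margin_slices / slices_per_second * 1000.0

# (frameslices per refresh, beamrace margin in slices), as quoted in the posts above
cases = [(40, 1.5), (10, 2.0), (4, 1.5)]
poll_ms = average_poll_latency_ms(1000)  # 1000Hz mouse

for slices, margin in cases:
    margin_ms = beamrace_margin_ms(slices, margin)
    print(f"{slices:2d} slices, {margin} slice margin: "
          f"{margin_ms:.3f} ms margin + {poll_ms:.1f} ms poll = "
          f"{margin_ms + poll_ms:.3f} ms")
```

The 40-slice case works out to 0.625ms of margin, which is the figure rounded to 0.6ms above, and the 4-slice case to 6.25ms.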