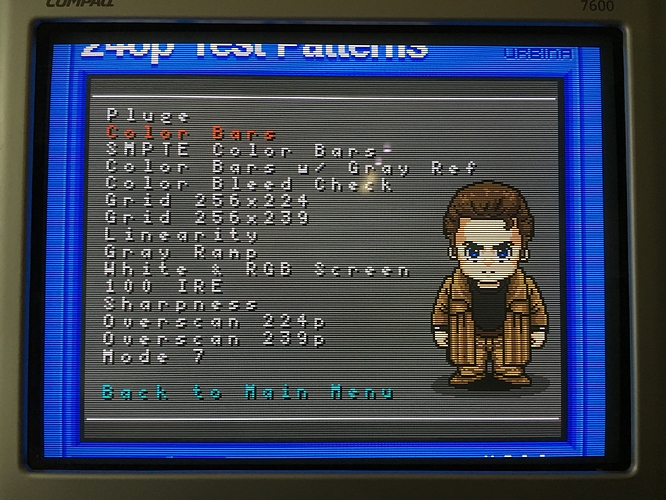

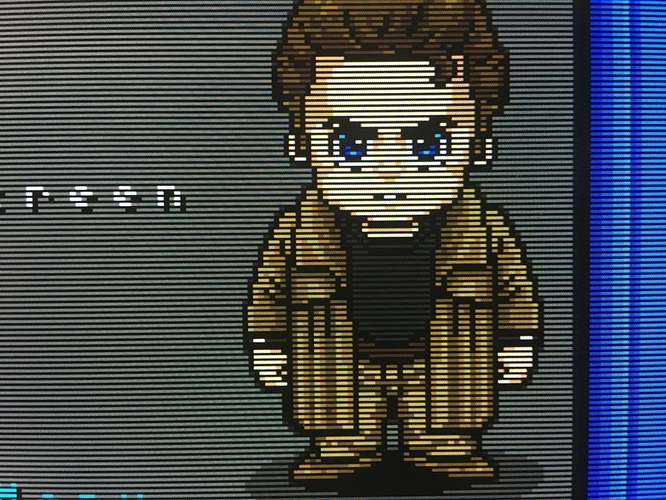

Scored a decent PC CRT! The picture looks good with brightness and contrast at their default settings, and I’m pretty sure it was rarely used, since the previous owner was in a nursing home. It’s a 17” Compaq 7600 with a “flat” screen, but you can clearly see the classic CRT curvature beneath the glass surface (or is that pincushion distortion? Hard to tell). The manufacture date is 2007(!), so it’s among the last CRTs ever produced.

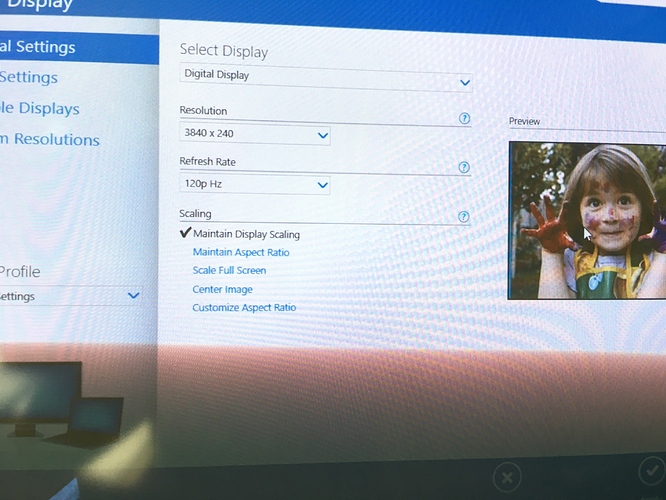

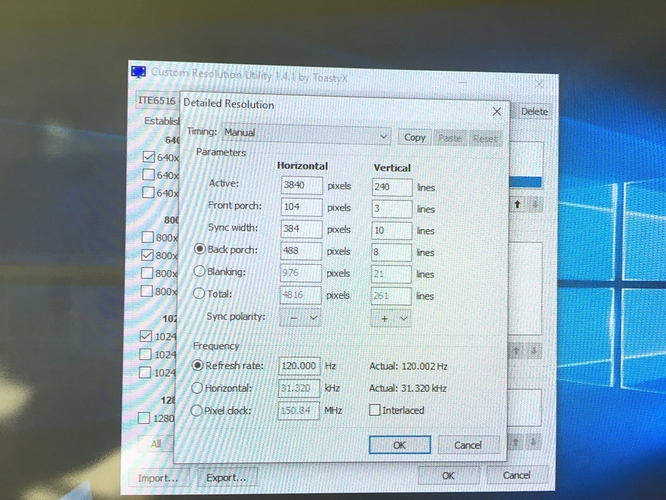

It’s been so long since I’ve done this that I’ve forgotten how to set up 240p in RetroArch. Using Custom Resolution Utility (CRU), I’ve created a 3840x240 @ 120 Hz resolution. With black frame insertion, this should look the same as 60 Hz.
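For anyone wondering why 120 Hz rather than plain 60 Hz: a CRT’s horizontal scan rate is roughly the total scanlines per frame times the refresh rate, and typical PC monitors won’t sync much below about 30 kHz. The sketch below estimates that rate, assuming a hypothetical vertical total of 262 lines (240 visible plus NTSC-like blanking; the timing CRU actually generates may differ), along with the content frame rate when one black frame follows each real frame. The helper names are made up for illustration, and the Compaq 7600’s exact supported range should be checked in its manual.

```python
def horizontal_khz(total_lines: int, refresh_hz: float) -> float:
    """Hypothetical helper: horizontal scan rate in kHz
    = total scanlines per frame * frames per second / 1000."""
    return total_lines * refresh_hz / 1000.0


def content_fps_with_bfi(refresh_hz: float, black_frames: int = 1) -> float:
    """Hypothetical helper: with black frame insertion, each game frame is shown
    once and followed by `black_frames` black frames, so the game runs at
    refresh / (1 + black_frames)."""
    return refresh_hz / (1 + black_frames)


if __name__ == "__main__":
    TOTAL_LINES = 262  # assumption: 240 visible lines plus NTSC-like blanking
    for hz in (60.0, 120.0):
        print(f"{hz:.0f} Hz -> {horizontal_khz(TOTAL_LINES, hz):.2f} kHz")
    print(f"content rate with BFI at 120 Hz: {content_fps_with_bfi(120.0):.0f} fps")
```

Under that assumption, 60 Hz lands around 15.72 kHz (arcade/TV territory, too low for a typical PC CRT), while 120 Hz lands around 31.44 kHz, and one inserted black frame brings the game back to 60 fps.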

First, what changes do I need to make to the RA config so that the monitor switches to 3840x240 @ 120 Hz when RA is launched?
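A rough sketch of the kind of `retroarch.cfg` changes that would usually be involved, assuming exclusive fullscreen is what makes RetroArch request the custom mode. The keys are standard RetroArch video settings, but `video_black_frame_insertion` is version-dependent (older builds expect `"true"`, newer builds take a count of black frames), so verify each one against your build’s settings menu. The `apply_settings` helper is hypothetical: it rewrites existing `key = "value"` lines and appends missing ones.

```python
import re

# Assumed settings for a 3840x240 @ 120 Hz exclusive-fullscreen launch.
SETTINGS = {
    "video_fullscreen": "true",
    "video_windowed_fullscreen": "false",  # exclusive fullscreen so the display mode actually changes
    "video_fullscreen_x": "3840",
    "video_fullscreen_y": "240",
    "video_refresh_rate": "120.000000",
    "video_black_frame_insertion": "1",  # assumption: newer builds take a count; older builds expect "true"
}


def apply_settings(cfg_text: str, settings: dict[str, str]) -> str:
    """Hypothetical helper: replace matching `key = "value"` lines and append missing keys."""
    lines = cfg_text.splitlines()
    seen = set()
    for i, line in enumerate(lines):
        match = re.match(r"\s*([A-Za-z0-9_]+)\s*=", line)
        if match and match.group(1) in settings:
            key = match.group(1)
            lines[i] = f'{key} = "{settings[key]}"'
            seen.add(key)
    for key, value in settings.items():
        if key not in seen:
            lines.append(f'{key} = "{value}"')
    return "\n".join(lines) + "\n"
```

Edit the file with RetroArch closed, since by default it saves its current configuration on exit and could overwrite the changes.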

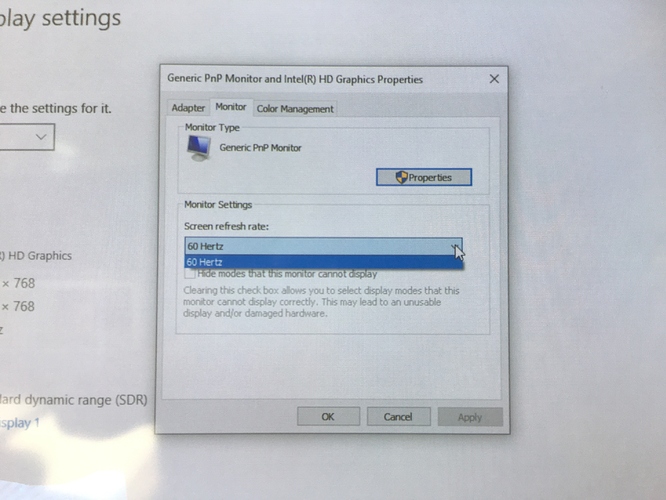

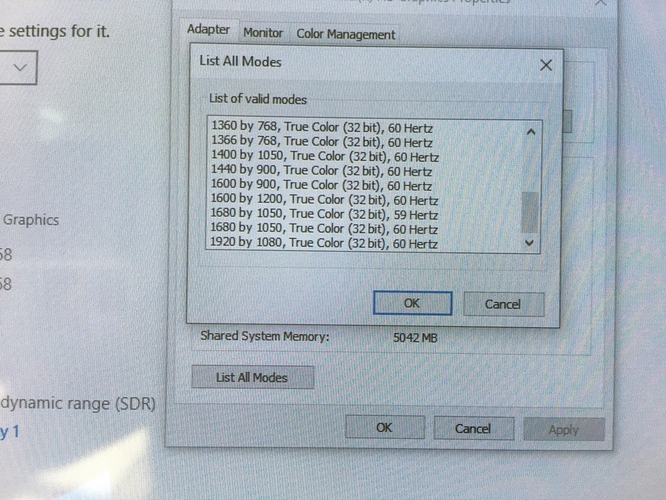

Has anyone had any success doing this with Intel integrated graphics? It’s giving me some major headaches, and the drivers appear to be broken. I can create a custom resolution in the Intel control panel, and when I go to “remove custom resolution,” it lists the custom resolution, so it’s clearly adding something. However, when I open the Intel Graphics display driver properties and click “list modes,” the custom resolution isn’t listed. In fact, only 60 Hz resolutions are listed, and it doesn’t even include common VGA resolutions like 640x480. I’m on Windows 10 and have reinstalled the latest drivers. Any ideas? I want my 240p!

I really don’t know what any of this stuff means and I don’t want to break anything. Looks like this CRT is going in the closet until I can get some better hardware.