So yeah, those black bars - does patching to NTSC remove them? It really seems like that’s what’s killing my setup for these games. It’s just weird 'cause it’s only the PSX PAL games that cause the issue, and not PAL games on any other system (that I’ve seen, anyway).

As for the point of using the shader - I think I left out some context, particularly that I’m talking about internal resolution, and which displays I’m using. It kinda works like this:

LCD TV - Shader Off - 1080p Resolution

- Internal 240p = No native scanlines (typical stock image [bad])

- Internal 480i = No native interlacing (shows as 480p)

CRT PC Monitor - Shader Off - CRTSwitchRes On

- Internal 240p = Native scanlines (100% perfect)

- Internal 480i = No native interlacing (shows as 480p)

LCD TV - Shader On - 1080p Resolution

- Internal 240p = Proper scanline effect (‘look’ of perfect scanlines)

- Internal 480i = Proper interlacing effect (‘looks’ exactly like 480i)

CRT PC Monitor - Shader On - CRTSwitchRes On

- Internal 240p = Native scanlines (100% perfect)

- Internal 480i = Proper interlacing effect (‘looks’ exactly like 480i)

The really nice thing is that at 240p via SwitchRes on the CRT, the shader doesn’t show at all, so it’s like it’s not even on during those times. But when the game switches to ‘internal’ 480i content, you get the interlacing effect automatically. Basically, that makes it behave like a CRT TV would in a real hardware setup. I’m sure there are some minor inconsistencies between the shader effects and ‘real’ 240p & 480i that I’m not aware of, but for my money it looks as perfect as I could ever ask for.
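For anyone curious why that works out, here is a rough Python sketch of the kind of per-line logic an interlacing-style shader can use. It is not the actual interlacing.glsl source: the 400-line threshold, the function name `line_gain`, and the `dark_gain` parameter are all assumptions made up for illustration.

```python
def line_gain(source_height, out_row, out_height, frame_count,
              interlace_threshold=400, dark_gain=0.0):
    """Brightness multiplier for one output row (1.0 = untouched).

    Hypothetical model of an interlacing-style shader; the threshold
    and parameter names are assumptions, not taken from any real shader.
    """
    if source_height > interlace_threshold:
        # Treated as interlaced content: the lit field flips every frame.
        y = source_height * (out_row + 0.5) / out_height + frame_count
    else:
        # Treated as progressive content: a static pattern with two
        # half-lines per source line (one lit, one dark).
        y = 2.0 * source_height * (out_row + 0.5) / out_height
    return 1.0 if (y % 2.0) > 0.99999 else dark_gain


# 240p content on a 240-line CRT mode: every row samples the lit half.
crt_240p = {line_gain(240, r, 240, f) for r in range(240) for f in (0, 1)}

# 240p content scaled to 1080 rows: lit and dark rows both appear.
lcd_240p = {line_gain(240, r, 1080, 0) for r in range(1080)}

# 480i content: the same row is dark on one frame and lit on the next.
row0 = (line_gain(480, 0, 480, 0), line_gain(480, 0, 480, 1))
```

Under those assumptions, `crt_240p` only ever contains 1.0 (the effect is invisible at native 240p), `lcd_240p` contains both lit and dark rows (the scanline look), and `row0` flips between frames (the interlacing look).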

What’s more, with these effects in place, I was able to create shaders that (to me) really mimic what different cable connections look like. Here are some examples so you can see what I mean.

You’ll have to right-click and “View Image” to get them to show properly.

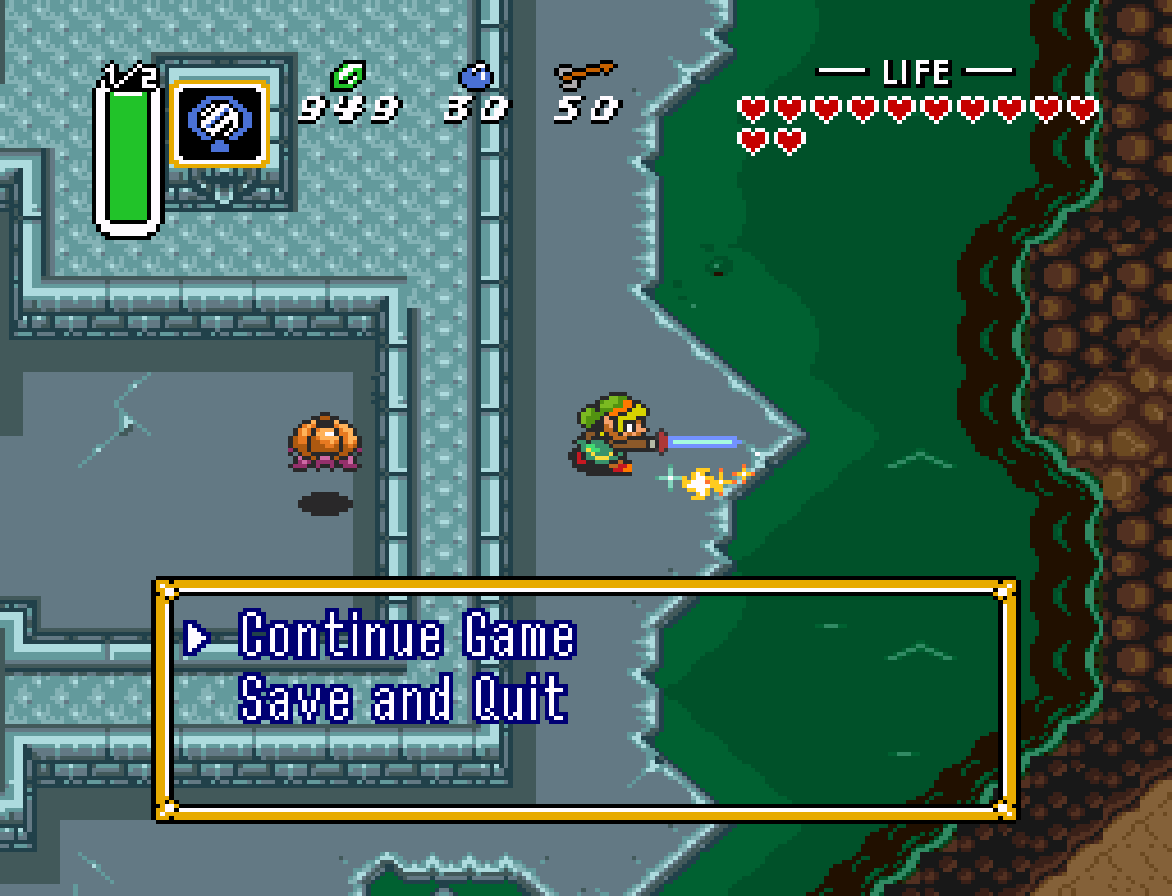

No Shader

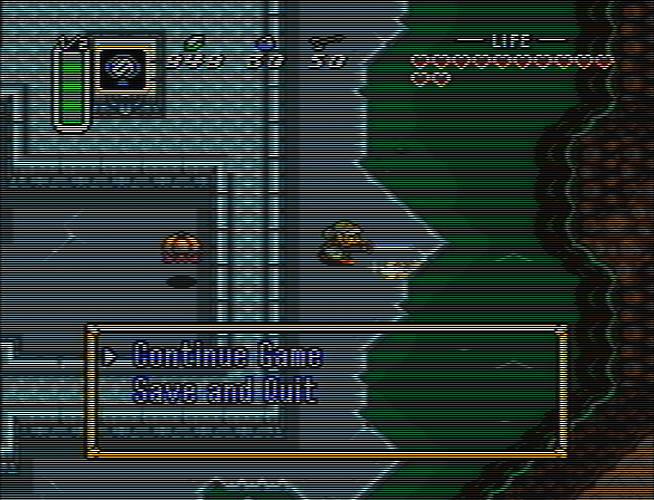

RF-VHF

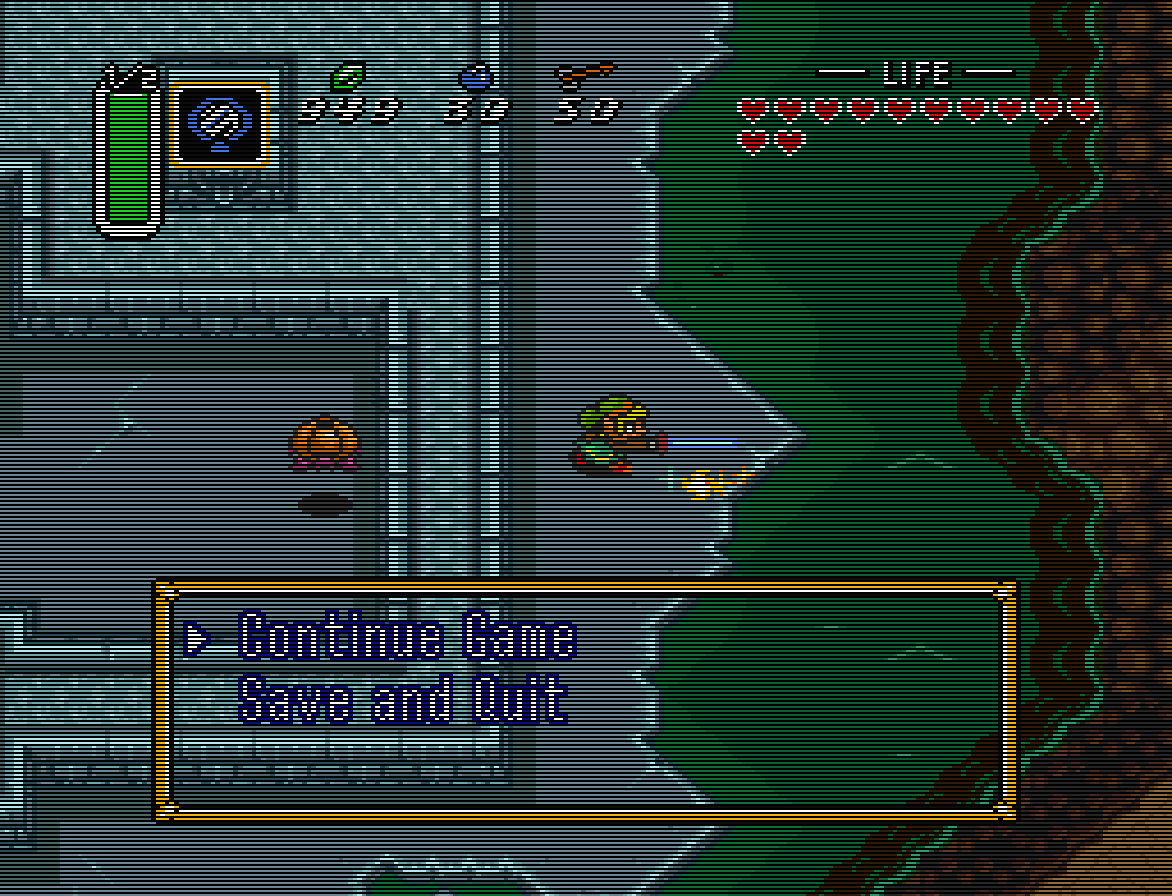

CVBS

YPbPr

I’m sure it’s pretty obvious that these aren’t a perfect match for the connections I labeled them as. It’s just sort of what I was able to throw together on top of interlacing.glsl, and for my purposes I think it looks very nice.

I’m sure someone better with shaders and more familiar with the ins/outs of these signals could do a much more ‘accurate’ job.

Anyway, I hope that all makes more sense. lol

EDIT: Replaced the Rayman 2 shots with Zelda LttP ones - I’m an idiot and wasn’t thinking that Rayman 2 is 640x480 hahaha.