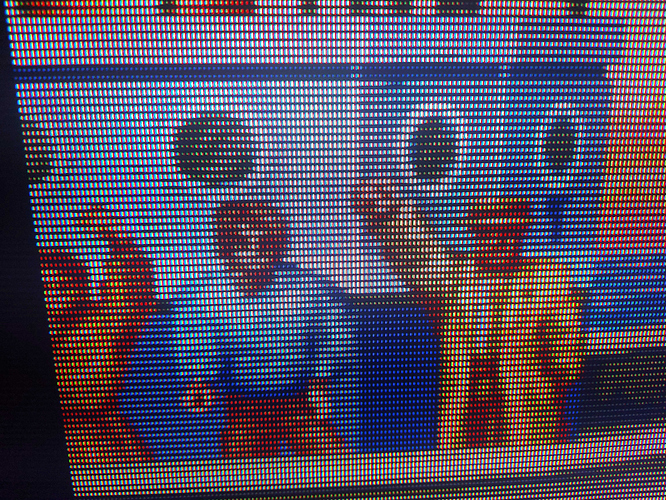

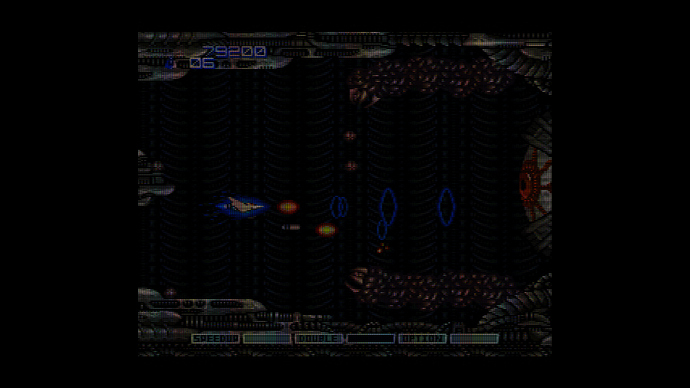

I prefer to say "each emulated CRT phosphor triad".

Thanks for finally articulating this clearly, because that’s exactly what is going on, and it’s a miracle that it happens to align properly for slot masks, etc.

This very precise accident is why BBGGRRX or XBBGGRR doesn’t align properly with RWBG/WOLED displays.
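To make the misalignment concrete, here is a rough Python sketch of how I picture it. It is not the shader's actual code; `lit_subpixels` and `gaps` are names I made up for illustration. It assumes each letter in the mask string is one display pixel showing a pure primary (X being black), that a pure primary lights only the matching physical subpixel, and that the white subpixel stays dark, which a real WOLED panel may not do.

```python
def lit_subpixels(mask: str, panel: str) -> str:
    """Expand a per-pixel mask into a row of physical subpixels.

    mask  : one letter per display pixel, e.g. "BBGGRRX" (X = black).
    panel : physical subpixel order inside one pixel, e.g. "RGB" or "RWBG".
    Lit subpixels keep their letter, unlit ones become ".".
    Assumption: W is never lit for a pure primary.
    """
    row = []
    for colour in mask:
        for sub in panel:
            row.append(sub if sub == colour else ".")
    return "".join(row)


def gaps(row: str) -> list[int]:
    """Distances, in subpixels, between neighbouring lit subpixels."""
    lit = [i for i, c in enumerate(row) if c != "."]
    return [b - a for a, b in zip(lit, lit[1:])]


# Compare one mask period on a plain RGB panel and on an RWBG panel.
for panel in ("RGB", "RWBG"):
    row = lit_subpixels("BBGGRRX", panel)
    print(f"{panel:5}{row}  gaps={gaps(row)}")
```

Under those assumptions the lit subpixels on the RGB panel are spaced 3, 2, 3, 2, 3 apart, which is close to even, while on RWBG the spacing becomes 4, 5, 4, 1, 4, so the second green and the first red end up directly next to each other and the emulated stripes are no longer evenly spread.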

Since this is the dawn of RGWB tandem OLED and new subpixel layouts, can you provide a simple blueprint or tips for adding new layouts or updating existing subpixel layouts in the near future?

It’s unfortunate that no one with an LG G5 has come forward to help test and confirm that they are getting properly aligned subpixel mask emulation, but I guess ignorance is bliss and no one, except us few, really cares much about or respects the value of getting CRT emulation right from the building blocks.

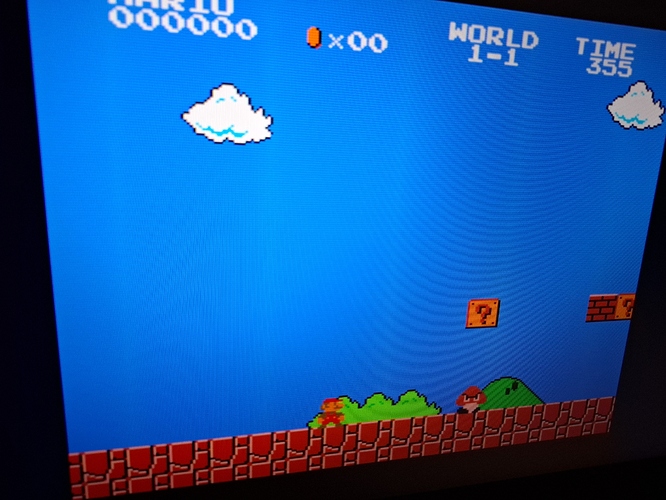

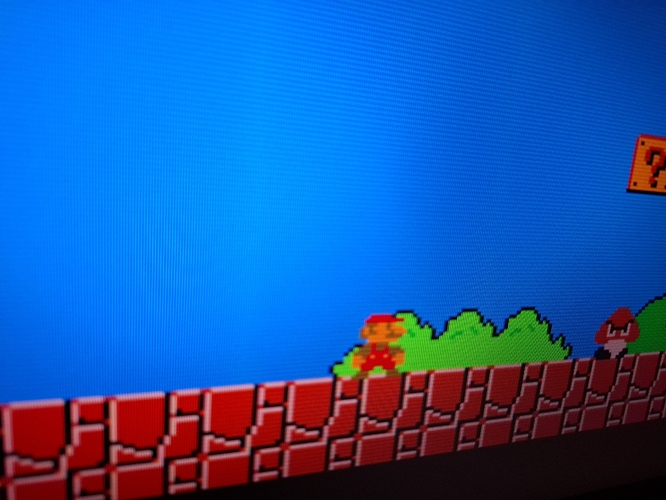

For what it’s worth, I downloaded a new nightly a couple of days ago and converted all of the presets in my latest Epic preset pack, which is based on Sony Megatron Colour Video Monitor v1. The 1 or 2 presets I tested looked pretty much identical to how they looked before the conversion.

I might still need to reinstate my custom modifications to some of the 8K masks, which gave me additional 4K masks at different heights that I liked. Given the changes you introduced in the mask code, though, I will first see how they look with the new mask code before deciding whether they need any further modification.

The reason I did that in the first place was that I preferred the stubbier 8K masks over the more elongated 4K ones, but then the 8K ones were no longer RGB/RBG/BGR.