It worked! You were right. I just needed to update RetroArch. The Lynx shader is displaying beautifully now! Thanks for your help. Right now I am using the Lite versions of all the shaders. I am planning on upgrading my PC in the near future. What is the minimum GPU I need in order to use the Advanced shaders without any performance issues?

That depends on your monitor resolution. I think a GTX 1070 does pretty well at 1080p and an RTX 2060 at 4K.

I run either a 3050, 3070 Ti, or 4070 Ti depending on which PC I am using ATM.

It’s not an exact science, and Vulkan support helps.

There are some really decent budget GPUs (~$350) available right now and the news is that more should appear very soon.

I run a GTX 1070 at 4K and play N64, PS1, Dreamcast and Wii without any issues. Some cores send GPU usage into the 80% to 90% range though.
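If you want to check figures like that 80% to 90% on your own machine, here is a minimal Python sketch, assuming an NVIDIA card with the `nvidia-smi` tool on the PATH. The helper names (`parse_gpu_line`, `has_headroom`) and the 90% threshold are made up for illustration and are not part of RetroArch or the Mega Bezel.

```python
import subprocess

# nvidia-smi query flags; prints one CSV line per GPU, numbers only.
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]

def parse_gpu_line(line):
    """Turn a line such as '85, 3120, 8192' into a dict (hypothetical helper)."""
    util, used, total = (int(field.strip()) for field in line.split(","))
    return {"util_pct": util, "vram_used_mib": used, "vram_total_mib": total}

def has_headroom(sample, max_util_pct=90):
    """True while GPU load stays under the threshold (90% is an assumption)."""
    return sample["util_pct"] < max_util_pct

if __name__ == "__main__":
    try:
        out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
        sample = parse_gpu_line(out.splitlines()[0])
        print(sample, "headroom OK" if has_headroom(sample) else "near the limit")
    except (OSError, subprocess.CalledProcessError, IndexError, ValueError):
        print("nvidia-smi not available or returned unexpected output")
```

Run it while a core and shader preset are active; if the load sits near the threshold, a lighter preset is probably the safer choice for that core.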

I’ve also tested a Radeon RX 6600 with similar results and a Radeon RX 6700 XT, which was flawless. My recommendations for a minimum GPU would be a Radeon RX 6650 XT, RX 5700 or GeForce GTX 1080, RTX 2060, RTX 3060 or higher. You can even take a look at the untested Intel Arc A750 if you’re feeling adventurous.

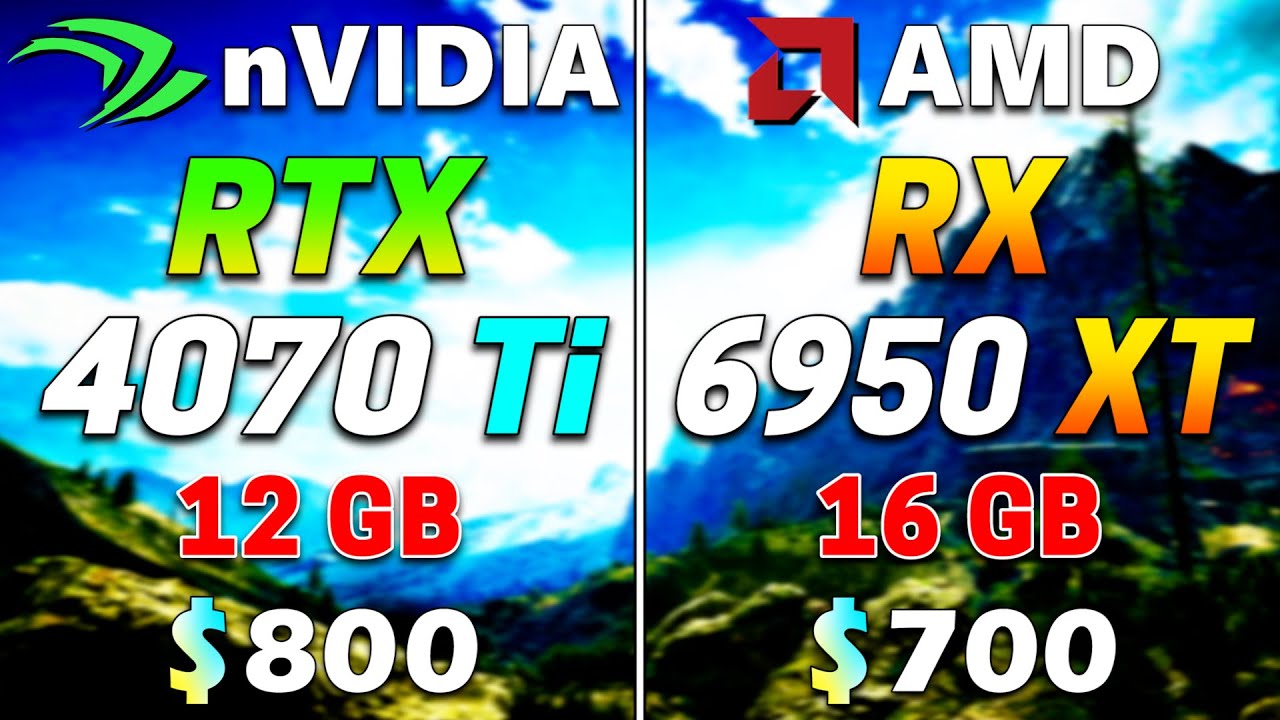

OK, good to know. I’m going to be using my 65 inch OLED as my main monitor in my living room :) I’ll be attempting my first PC build within the next couple of weeks. I would like to get a 4070 Ti. $800 is a little more than I’d like to spend on a GPU right now, but I figure I’m going to have it for a long time so I might as well splurge a little. Any recommendations on where to find a good deal on GPUs?

My thoughts exactly. I neglected to mention any AMD offerings because there have been so many reported OpenGL issues.

Not really.  I usually search both Newegg and Amazon and go from there.

Good luck! It’s not as tough as it once was.  Just remember that you get what you pay for. Buy well known brands and spend more than you want to. (And don’t overclock unless you have bottomless pockets.)

If you want a faster PC, buy faster parts.

You needn’t spend that much and still can get much more performance than you need, especially for retro gaming.

Even this is excessive performance for a RetroArch geared machine.

XFX Speedster MERC319 RX 6950XT Black Gaming Graphics Card with 16GB GDDR6 HDMI 3xDP, AMD RDNA 2 - RX-695XATBD9 https://a.co/d/ey1Jadi

XFX Speedster MERC319 AMD Radeon RX 6900 XT Black Gaming Graphics Card with 16GB GDDR6, HDMI, 3xDP, AMD RDNA 2 RX-69XTATBD9 https://a.co/d/7mQb6yt

XFX Speedster SWFT 319 AMD Radeon RX 6800 XT CORE Gaming Graphics Card with 16GB GDDR6 HDMI 3xDP, AMD RDNA 2 RX-68XTAQFD9 https://a.co/d/00Zkyyz

XFX Speedster QICK319 AMD Radeon RX 6800 Core Gaming Graphics Card with 16GB GDDR6 HDMI 3xDP, RDNA 2 RX-68XLALFD9 https://a.co/d/12hvmPa

XFX Speedster QICK319 Radeon RX 6750XT Ultra Gaming Graphics Card with 12GB GDDR6 HDMI 3xDP, AMD RDNA 2 RX-675XYLUDP https://a.co/d/cpnUOyw

All of these graphics cards outperform the GeForce RTX 3070/Ti in rasterization performance. Plus if you’re overspending on a graphics card in the hope that it will last longer in 2023, it might be a good idea to get something that at least has enough VRAM to run both the games of today as well as tomorrow.

As of right now even 12GB is cutting it close for a high end experience with the latest generation games.

So the GeForce RTX 4070Ti and all of its lower tiered brethren all have that shiny nVIDIA planned obsolescence built right in so it will be interesting to see how they fare against the 16GB+ GPUs in a couple years time.

The Radeon RX 6950XT beats the GeForce RTX 4070 in rasterization performance as well. The main downsides in going with an AMD GPU are lower power efficiency vs the new generation of nVIDIA Cards, relatively lower Raytracing performance and to a lesser extent worse GPU encoding performance at lower bitrates.

Another upside is lower CPU overhead in the drivers, which means that, all else being equal, an AMD GPU will use less CPU than an nVIDIA GPU. That can let you get away with a weaker CPU without running into issues such as hitches and lower 1% lows caused by CPU bottlenecks.

RetroGames4K recently switched from an nVIDIA GPU to a Radeon 6800XT. I haven’t heard him complaining about performance in OpenGL or in any other scenario. As a matter of fact, HSM Mega Bezel recommends Vulkan and performs best with it. Guess who invented Vulkan? Also guess whose GPUs have traditionally excelled and punched above their weight using Vulkan?

Trust me, it’s not the Green one. I am saying this as a fan of nVIDIA GPUs. They are traditionally my preferred brand overall, but for a long time now they have been trying really hard to give their customers less and less while charging more and more. I’m not encouraging you to stand up against them, just to look objectively at where you would get the most performance for your hard-earned dollar, and the answers are very clear.

If you have the extra dollars, you can’t go wrong pairing any current GPU with this CPU:

AMD Ryzen™ 7 5800X3D 8-core, 16-Thread Desktop Processor with AMD 3D V-Cache™ Technology https://a.co/d/5JqcP9f

This is what @RetroGames4K does with his Radeon RX 6800XT. I’m sure he can tell you all about his experience having switched from one brand to another:

https://www.youtube.com/live/VQ-fGKwjznY?feature=share

https://www.youtube.com/live/NHCuk5Di9FI?feature=share

https://www.youtube.com/live/pCoAJ17VK6s?feature=share

https://www.youtube.com/live/hnwCwBBPags?feature=share

Also, it’s good to look at some actual benchmarks before pulling the trigger.

You don’t want a situation where due to bad coding/porting or for whatever reason your brand new $800 GPU struggles to play a new title at 4K because it’s running up against a VRAM bottleneck.

Finally, overclocking isn’t only for when you can’t afford or don’t want to spend more money on higher performing PC parts. There are some folks who just want the best possible performance out of whatever they purchase. If it’s presented in a user friendly, foolproof manner, they would happily click AutoOC in Ryzen Master or use the Auto OC/Curve Optimizer in MSI Afterburner, because it’s easy to do and, who knows, might provide a slightly better overall experience for little to no downside.

You can make your own decision, but if you want every core to run successfully (not perform well… just run at all), AMD has known issues with OpenGL. I cannot recommend a Ryzen until the issues go away. It should not be up to the emulator authors to accommodate AMD.

As far as overclocking goes, safe is relative… ask the slew of AM5 users who have had their CPUs melt because of “approved” overclocking.

The Ryzen CPU I recommended is for the tried and tested older AM4 platform, which you yourself have bought into and, if I recall correctly, had a good experience with. The value it brings is exceptional. And which emulators, cores or software are there that can’t or won’t run on AMD CPUs or GPUs? I’m finding it hard to locate any up to date empirical evidence to support this.

As for their issues with their latest platform, buying into Gen1 of any product is always a risky endeavour; ask anyone who has had issues with 12-pin PCIe power connectors. I can only imagine what lies ahead once they learn from their missteps and improve things going forward.

Nearly all major brands have had issues with various products in the past. 1st gen Pentium FDIV bug, anyone? 1st gen AMD Phenom TLB bug? Spectre and Meltdown side-channel attacks, and more recently NZXT’s H1 fire hazard GPU riser problem.

I do acknowledge your concerns though, I’m not dismissing them completely but you don’t always come across as fair and objective when assessing these things and value for money doesn’t seem to be a factor in your advice or decision making.

Also, I wasn’t endorsing or encouraging overclocking, I was just addressing your point about not “overclocking unless you have bottomless pockets”.

The current AMD issues don’t really have anything to do with overclocking, or at least the type of overclocking that I was referring to. This is more of a one-off, exceptional situation, while people have been successfully and safely overclocking various parts, as well as running XMP profiles on their memory, for over a decade at this point.

The issue with AMD’s current platform is not overclocking itself or memory overclocking. It is the fact that the voltages applied were above the official specifications, the motherboard manufacturers were not properly complying with AMD’s recommendations, and AMD’s QA/QC wasn’t robust enough to catch these issues before they got to market. So to write off the practice of “overclocking”, especially XMP type memory overclocking, which is the only way to get your RAM to run at its advertised speeds, is a bit disingenuous and possibly misleading. You don’t buy 3200MHz DDR4 to run it at 2133MHz. AMD, Asus and all other manufacturers currently experiencing these AM5 issues are going to be taking care of their customers.
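To put rough numbers on the 3200 vs 2133 point, here is a small Python sketch of theoretical peak memory bandwidth. It assumes the advertised "MHz" figure is really the transfer rate in MT/s, a 64-bit (8 byte) bus per channel, and a dual-channel setup; the function name is just for illustration, and real-world throughput will be lower than these peaks.

```python
def peak_bandwidth_gbs(mega_transfers_per_s, channels=2, bus_bytes=8):
    """Theoretical peak bandwidth in GB/s: transfers/s * bytes per transfer * channels."""
    return mega_transfers_per_s * 1e6 * bus_bytes * channels / 1e9

jedec_default = peak_bandwidth_gbs(2133)  # typical DDR4 speed with XMP disabled
xmp_profile = peak_bandwidth_gbs(3200)    # advertised speed with XMP enabled

print(f"DDR4-2133 dual channel: {jedec_default:.1f} GB/s")
print(f"DDR4-3200 dual channel: {xmp_profile:.1f} GB/s")
print(f"XMP gives about {xmp_profile / jedec_default:.2f}x the peak bandwidth")
```

So leaving XMP off on a 3200 kit gives up roughly a third of the peak bandwidth you paid for.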

Also, the practice of motherboard manufacturers running components beyond spec didn’t start now or with AM5. Motherboard manufacturers have been looking for all sorts of ways to differentiate themselves in a crowded and highly competitive market and also like to make their benchmarks look shiny. That’s why things like MCE or Multi-Core Enhancement have been introduced.

The problem now is that both AMD and Intel have basically been using up all of their CPU headroom aiming for peak performance at all costs. Look at the space heater Core i9-13900KS for example, the thing throttles out of the box! You can’t even keep the CPU cool with a 360mm AIO!

AMD has no choice but to respond to this with a bit of limit pushing of their own leaving motherboard manufacturers with a bit less leeway to squeeze things beyond the default.

I know we’ve strayed off topic quite a bit so feel free to clean things up @HyperspaceMadness.

I have a Radeon 6800 XT and a Ryzen 3600X and I don’t have issues with OpenGL. Runs well, at least for me…

Citra and PCSX2 have crashing issues. It’s not OpenGL in general; something specific is missing.

The same thing happened to Nvidia cards for a while until the drivers were sorted out.

IDK… maybe AMD has sorted them out now.

I seriously DO NOT think giving a brand new builder the advice to overclock a system is wise.

No matter how many words you use to justify it.

Just in case you didn’t know, last year AMD updated its OpenGL drivers…

I didn’t, I was just debunking what you said about overclocking and needing to buy faster parts if you wanted faster performance.

Additionally, while I wouldn’t generally advise a new builder to overclock their CPU or GPU, I would definitely advise any and every new builder to enable XMP as well as AMD’s alternative EXPO in order to get the RAM speeds they paid for.

You see, there’s overclocking and then there’s “overclocking”. XMP is technically overclocking, but it’s not really running the memory beyond its manufactured capabilities and specs. It is merely running the memory beyond the approved JEDEC specifications, which tend to lag behind the pace of technological advancement. It is also running the memory beyond the officially supported (usually JEDEC matching) specifications of the CPU’s memory controller, which cannot be updated mid generation after a product is launched, regardless of any new JEDEC approvals being attained.

Which is probably what broke things. If anyone who HAS had issues can report that they are gone then I will stand corrected.

Man, personally I wouldn’t suggest jumping on a 4070 Ti for $800; that really is a lot. It is a good performer, but its VRAM capacity will eventually hold back performance the card could otherwise deliver.

I would suggest going straight for the 4070 FE if you can. I had both cards and in the end I returned the 4070 Ti because it wasn’t worth it. The 4070 is a well-balanced card in terms of performance for its VRAM, performance per watt and performance for the price. It also costs $599 instead of $799.

It also comes with an adaptor for older PSUs, so there’s no need to buy a new one. I have an SF600 (600 W) PSU and the 4070 works great, while the 4070 Ti would need at least 700 W and, I guess, doesn’t come with an adaptor for older PSUs.

For rasterization, if your monitor’s refresh rate is no higher than 144 Hz you don’t need to worry too much: every card from the 4070 upwards delivers great rasterization, especially with DLSS on newer titles. Games more than two years old can basically run at 4K 144 fps all the time, so the 4070 will be great; it also delivers good ray tracing and DLSS performance and supports DLSS 3. AMD cards are all about rasterization, but that is of little use on monitors at 144 Hz or below, and they lack ray tracing performance and consume far more power, so they age quickly once you want to try ray-traced titles.

I have a 5800X3D in my last AM4 build. While it is still on the overkill side (I would suggest the 5700X as the best bang-for-the-buck premium CPU on the AM4 platform), it helps keep the FPS lows as high as possible. I preferred that because I usually think in terms of two GPU cycles per system cycle: I’d rather spend a good premium on the rest of the system (CPU, motherboard, memory and PSU) than on the GPU, because I usually go through one system and two GPUs over eight years.

On the emulation side, I play at 4K with the 4070 and 5800X3D on a 4K 144 Hz screen, with max emulation settings and shader presets with reflections, and this system handles it all. DuckStation for PS1 is maxed out, PCSX2 too, and Wii U and GameCube are maxed out, so I wouldn’t go for more if you mainly do heavy emulation, because you wouldn’t get much more out of it.

If you like AMD, please consider waiting. Unfortunately AMD decided to skip the mid-range segment for now and will soon release the 7600 8GB, which is a very cheap card. If you can wait for them to release a 7800 XT or 7800 before deciding, that would be better, because those could at least offer better efficiency per frame and more VRAM than the 4070 and 4070 Ti, if that’s your priority, without costing too much. However, they never talk about FSR 3, which is quite a risk at the moment, while FSR 2 also has to compete with XeSS from Intel, which delivers better images.

The comparisons between the new-gen 4000 series and AMD’s last-gen 6000 series are really worthless; don’t fall for them, for real. I reviewed them all for a site in my region, and seriously, you will be stunned by the power consumption required for that performance. When you play retro games, for instance a SNES game with max shaders with reflections and smoothing, the card works at around 50 to 70% because it’s graphically intensive. Consuming 350 watts on a 6800 versus 180 on a 4070, with the same performance capped at 60 Hz for the game, makes a huge difference in acoustics and environment.
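As a rough illustration of what that 350 W vs 180 W gap adds up to, here is a small Python sketch. The wattages are the ones quoted above; the 2 hours per day and the 0.30 per kWh price are purely assumed placeholders, so plug in your own numbers.

```python
def energy_kwh(watts, hours):
    """Energy used at a constant power draw, in kilowatt-hours."""
    return watts * hours / 1000

def yearly_cost(watts, hours_per_day, price_per_kwh, days=365):
    """Cost of running at that draw every day for a year."""
    return energy_kwh(watts, hours_per_day * days) * price_per_kwh

HOURS_PER_DAY = 2      # assumption, not from the thread
PRICE_PER_KWH = 0.30   # assumption, in your local currency

high = yearly_cost(350, HOURS_PER_DAY, PRICE_PER_KWH)  # figure quoted for the 6800
low = yearly_cost(180, HOURS_PER_DAY, PRICE_PER_KWH)   # figure quoted for the 4070

print(f"350 W card: {high:.2f} per year")
print(f"180 W card: {low:.2f} per year")
print(f"Difference: {high - low:.2f} per year")
```

The money is only part of it; the extra 170 W also ends up as heat and fan noise in the room, which matters in a living room setup.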

Once you’ve decided, enjoy it!

Well spoken my friend, including all that followed.

The only reason I bought a 4070 Ti was space constraints. I need the slot next to my GPU for my Thunderbolt card. (Which connects to my 5 external HDDs.) If I had three available slots to give to my GPU, I may have made another decision.

That being said, I spent $1500 on a 3070 Ti during the GPU crunch. I needed something that would comfortably run everything the Mega Bezel has to offer, with two full time 4K displays.

I was tired of developing 4K graphics on 1080 displays.  Sometimes need outweighs price considerations.

Oh geez you have given me a lot to think about here lol. This is my first build and I am still in the research phase. I definitely appreciate all of your input.

I’m going to be using this as an HTPC connected to a 65 inch 4K OLED. I’ll be playing retro games using RetroArch/Mega Bezel as well as modern PC games, and using all my streaming services as well. Ideally I’d like to play games in the highest resolution possible. If I’m not getting the performance I want from the 4070 Ti while playing in 4K, I was thinking I can use DLSS to help boost frame rates. I don’t have any experience with it and am not sure how well it works though. You make a good point about the 12GB limitation for future games. I wonder if it makes more sense to wait and save a little more for a 16GB card to future proof myself a little. The only reason that I decided on Nvidia was because I heard the AMD cards historically have had some reliability issues. I’m pretty open to all options though. I was thinking about getting the Ryzen 7 5800X. You think the 5800X3D would be a noticeable upgrade?

Ok thanks for the advice. I’ll take another look at the 4070. Like I said, I’m still in the research phase right now. I’m not in a huge hurry and I want to make sure I am choosing all the right components. Seems like there are a lot of things I need to consider before I start making any purchases. All of this input has been really helpful!

There is no one right answer, as each option, even at that $800 price point, has compromises. Now if nVIDIA didn’t hold back on VRAM and priced their GPUs a bit closer to how they did in some generations past, then there would have been no question, but it is what it is.

The only GPUs where you may not be compromising are the 4080/4090, but then you’re compromising your wallet. So we have to live with these tradeoffs. There’s nothing wrong with trying the 4070 as a starting point, particularly where power consumption might also have to be considered. For emulation it will handle everything. You can play almost any PC game at 4K as well.

It’s a less risky investment when we already know that the 4070Ti’s performance level can be easily surpassed in the not too distant future, given where we already are performance-wise with the 4090. Just let that sink in for a moment. There’s a humongous gap in performance between an RTX 4090 and a 4070Ti. That indicates that nVIDIA is holding out on their customers by artificially limiting the performance of their cards and cutting back on important features like VRAM, which will entice customers to need or want to upgrade sooner.

Look at Duimon’s example: he just upgraded to a 2070Ti 8GB version…errr sorry, a 3070 Ti, only to feel the need to upgrade again so soon after. I mean, not everyone can sustain that, but even if you’re in a position to do so, is that the wisest way to handle one’s finances when you know you’re basically being played and manipulated by a huge, greedy corporation?

And what set the bar is that AMD already had an entire line-up of high end graphics cards with 16GB VRAM. Why is nVIDIA only giving you 16GB on 3060 and 4060Ti level cards?

So it’s really hard to recommend a 4070 right now as well as a 4070Ti. AMD’s video encoding performance leaves a bit to be desired and you’re going to be streaming so what can I say, you might be better off living with nVidia’s compromises plus you do get better RayTracing performance.

I mainly suggested the other GPUs to show you that you didn’t have to spend $800 for that level of performance and experience. You will have to decide how much saving some money, raytracing performance, video encoding quality and performance, and power consumption matter to you.

If you’re building a home theater PC, power consumption, heat and graphics card size are all important things to consider. The 4070 might hold an advantage here.

One thing you can consider, if you wish to play exclusively at 4K, is lowering settings when you need to. It may not be possible to play every game at 4K with max settings, especially the latest PC games on a 12GB framebuffer, and who knows what the case will be a few years down the road.

So you’re basically damned if you do, damned if you don’t. It’s not all gloomy though, because each and every one of the GPUs we’ve touched on today is capable of providing you with a high quality gaming experience; it’s just that some are better in some areas than others and each has its strengths and weaknesses.

As for the CPU question, it’s no question, just get the 5800X3D and forget it. It will make a difference, especially where it counts in the minimum frames per second and can power any GPU available today at performance levels on par with the latest generation high end gaming chips.

Man, I had a GTX 980 before the 4070.

It was on a cheap 1080p 60 Hz monitor as well. I waited so long because of the crunch and got into other hobbies. Luckily, indie games, competitive games and especially emulation were still great on the 980, even if I couldn’t run the smooth or reflection presets.

Then I jumped to 4K 144 Hz and the 4070 and it really is perfect. I also have a mini-ITX case and the 4070 was the only one that fit well.

I was, however, able to review all the AMD and Nvidia cards as a job, so I know what a lot of them are capable of. But pricing in my region was so bad compared to the US: even when the 4070 came out at 669 euros, the 3070 Ti was still priced higher. Crazy.

But hey, never feel ashamed if you needed to buy something. It’s when you can wait that you should wait for the best moment.